Why Component Testing Fails at Enterprise Scale — And Where Test Orchestration Takes Over

Most engineering teams start with component testing because it feels safe.

You test one module. One function. One service. You mock dependencies. You isolate behavior. The feedback loop is fast. Failures are easy to debug. Teams build confidence quickly. And at small scale, that confidence is justified.

But I’ve seen this pattern shift dramatically once organizations move into enterprise territory — multiple microservices, shared environments, distributed teams, continuous deployment pipelines, and regulatory pressure. What once felt like disciplined engineering begins to expose cracks. The more components you add, the more those isolated tests start missing the bigger picture.

That’s where the real component testing limitations begin to surface.

Component tests validate logic. They do not validate behavior across systems. They do not validate workflow continuity. They do not validate production-like interactions between services, data stores, APIs, authentication layers, and user interfaces.

At enterprise scale, software rarely fails inside a single component. It fails between components. And that’s exactly where traditional strategies struggle.

Organizations continue to invest heavily in component-level validation. In fact, 82% of teams still rely heavily on manual or component-level testing, while only 45% have automated regression suites at scale, according to industry reports.

This imbalance creates structural risk.

Component testing builds a strong base in the testing pyramid. But when enterprises depend on it as the primary strategy, they encounter enterprise test automation challenges that no amount of isolated scripts can solve.

Scalable test automation requires more than isolated verification. It requires coordination, orchestration, data continuity, and real system validation. And that is where traditional approaches start to break.

What Component Testing Actually Covers — And What It Ignores

Component testing remains a foundational discipline in software engineering. It protects individual modules. It verifies business rules. It prevents regressions at the function or service level.

But enterprise systems do not fail inside neat boundaries. They fail where systems connect.

What Component Tests Do Well

Component tests validate logic in isolation. Teams mock dependencies. They simulate external services. They inject test data directly into functions. They run thousands of tests in seconds.

This approach gives developers confidence during rapid development cycles. It supports continuous integration. It reduces debugging time when something breaks.

And for small systems, this works exceptionally well.

Component testing strengthens the base of the testing pyramid. It provides early feedback. It improves code reliability. It reduces simple defects.

But it assumes isolation reflects reality.

Enterprise software rarely operates in isolation.

What Component Tests Cannot See

Once systems grow into distributed architectures, the blind spots become obvious.

Component tests do not validate:

- Service-to-service communication failures

- Schema mismatches between APIs

- Authentication token expiry issues

- Database constraint conflicts across workflows

- Race conditions in asynchronous flows

- Real user journeys across multiple systems

In microservices environments, failures typically occur between components, not within them.

Industry benchmarks reinforce this risk. High-performing organizations maintain defect leakage under 2%, and most enterprises aim to keep it below 5%, according to the Capgemini World Quality Report. When teams rely heavily on isolated testing, integration defects frequently escape detection until staging or production.

That gap represents one of the most critical component testing limitations.

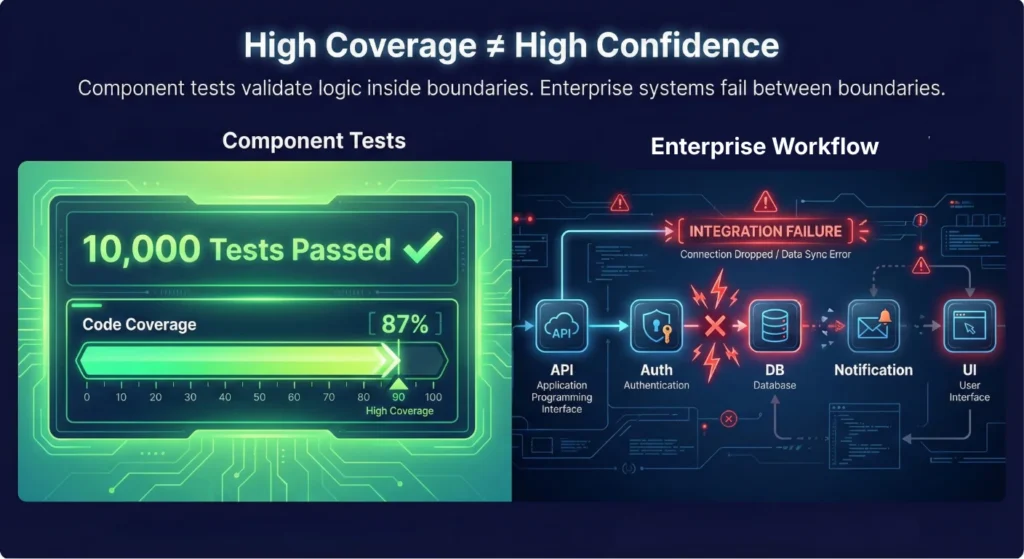

The Coverage Illusion in Enterprise Systems

Strong component coverage creates an illusion of safety.

A codebase may show thousands of passing tests. Dashboards may display green builds. Yet real workflows remain untested.

Only 19.3% of organizations report automating more than half of their codebase, according to one industry report. Even within that minority, automation often concentrates at the unit or component level rather than at workflow or integration layers.

This imbalance creates enterprise test automation challenges that surface late in the release cycle.

Component testing verifies correctness inside boundaries. Scalable test automation must verify correctness across boundaries. That shift requires coordination, state management, environment awareness, and execution control, capabilities that isolated tests do not provide.

Many teams assume strong component coverage equals strong system quality. Yet overall automation coverage remains limited across the industry, and that gap often reflects the difficulty of moving beyond isolated tests into integrated validation.

The result? A testing strategy that looks robust on paper but leaves workflow-level risks exposed. This is where scalable test automation begins to demand more than component verification. It demands system-level validation that mirrors production behavior.

And once enterprises attempt that transition, the next challenge emerges: instability.

The Flaky Test Problem: When Isolation Starts Working Against You

As test suites grow, instability creeps in.

At first, it appears harmless. A test fails once. You rerun it. It passes. The team shrugs and moves on.

But at enterprise scale, flakiness compounds. What begins as a minor annoyance becomes a systemic drain on productivity and trust.

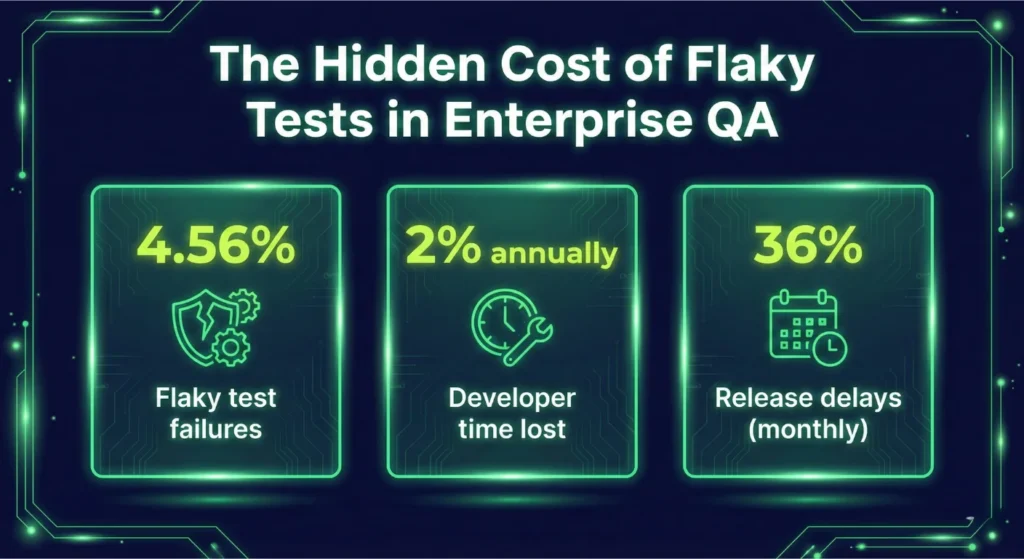

Flaky Tests Are Not a Minor Irritation

A flaky test fails without a real defect in the code. It might fail due to timing issues, environmental variability, network latency, or improper mocking.

In isolation-heavy strategies, these issues multiply. Research from Google Engineering found that 4.56% of all test failures were caused by flaky tests, consuming approximately 2% of total developer time.

Two percent may sound small. For a 100-engineer organization, that equals two full-time engineers spending their year diagnosing unreliable tests instead of building features.

This represents one of the most underestimated enterprise test automation challenges.

CI Pipelines Become Noise Machines

As component tests scale into thousands, CI systems begin to amplify instability.

Developers lose confidence in red builds. They rerun pipelines instead of investigating failures. Real defects hide behind intermittent noise. According to the GitLab Global DevSecOps Report, 36% of developers experience release delays at least monthly due to CI test failures.

When instability affects releases, leadership notices. Frequent false alarms create operational drag. Teams slow down deployments. They hesitate to merge. They delay releases “just to be safe.”

Ironically, a system designed to improve confidence begins to erode it.

Isolation Does Not Prevent Instability — It Can Cause It

Many teams assume that component tests are inherently stable because they run in controlled environments.

In practice, excessive mocking and artificial setups introduce their own fragility. Mocks drift from real contracts. Dependencies change without synchronized updates. Data fixtures grow complex. Timing assumptions become brittle.

Mozilla reported that fixing flaky tests improved developer confidence by 29% and significantly reduced escaped defects.

The lesson is clear. Flakiness is not just a technical nuisance. It directly affects morale, productivity, and quality outcomes.

And when component-heavy strategies dominate without orchestration and integration controls, flakiness scales with them. This is where scalable test automation demands coordination — retry logic, dependency awareness, environment control, and execution governance.

Without those controls, enterprises end up managing instability instead of preventing it.
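As a rough illustration of what retry logic can look like, the sketch below wraps a test in a bounded retry and records whether a pass needed more than one attempt. The names `run_with_retry`, `RetryResult`, and `make_intermittent_test` are hypothetical and not taken from any particular framework. The point is that a retry should surface flakiness as a signal, not hide it.

```python
from dataclasses import dataclass


@dataclass
class RetryResult:
    passed: bool
    attempts: int
    flaky: bool  # True when the test passed only after at least one failure


def run_with_retry(test_fn, max_attempts=3):
    """Run test_fn up to max_attempts times and report how it behaved."""
    failures = 0
    for attempt in range(1, max_attempts + 1):
        try:
            test_fn()
            return RetryResult(passed=True, attempts=attempt, flaky=failures > 0)
        except AssertionError:
            failures += 1
    # Every attempt failed: treat it as a consistent failure, not a flaky one.
    return RetryResult(passed=False, attempts=max_attempts, flaky=False)


def make_intermittent_test(fail_first_n):
    """Hypothetical test that fails its first n calls, then passes.

    Deterministic on purpose so the demo itself is not flaky.
    """
    calls = {"count": 0}

    def test():
        calls["count"] += 1
        assert calls["count"] > fail_first_n, "simulated transient failure"

    return test


if __name__ == "__main__":
    print(run_with_retry(make_intermittent_test(0)))  # stable pass
    print(run_with_retry(make_intermittent_test(2)))  # passes on third attempt
    print(run_with_retry(make_intermittent_test(5)))  # consistent failure
```

A pipeline could route results with `flaky=True` to a quarantine report while still failing the build on consistent failures. That routing policy is an assumption here, not something the retry wrapper decides.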

The Hidden Cost: Maintenance Becomes the Real Project

Component testing does not fail overnight. It fails gradually — through maintenance.

At small scale, updating a few mocks or fixing broken assertions feels manageable. At enterprise scale, maintenance transforms into a parallel engineering effort.

And in many organizations, it quietly becomes the dominant one.

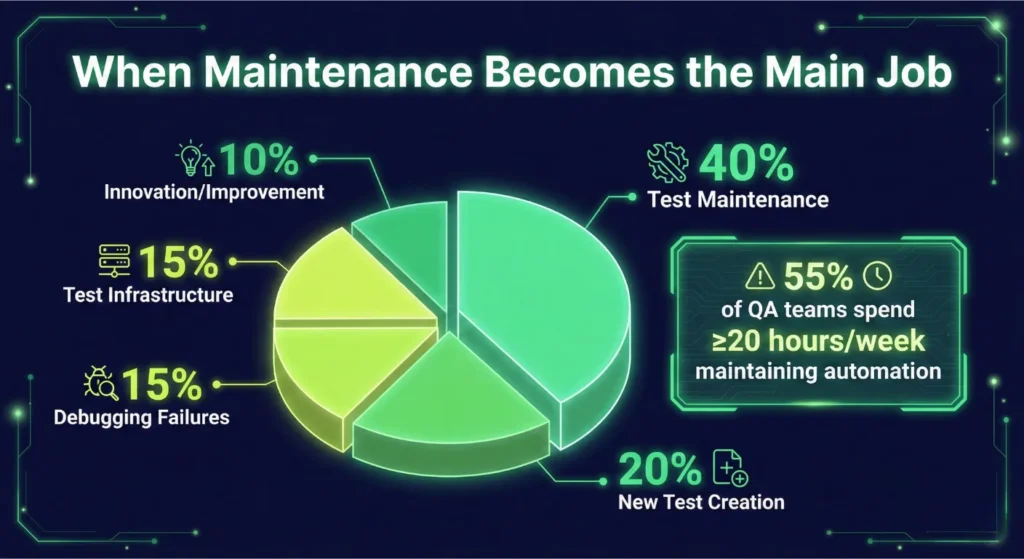

Test Maintenance Starts Consuming Engineering Capacity

Enterprise teams often underestimate how much effort they spend maintaining automated tests.

According to the PractiTest State of Testing Report, 55% of QA teams spend at least 20 hours per week maintaining automated tests.

That is half a workweek. Not writing new tests. Not improving coverage. Not optimizing pipelines. Maintaining what already exists.

In more complex enterprise environments, the numbers grow even more alarming. A Fortune 500 case study documented engineers spending 67–89 hours per week maintaining automation suites.

That is not sustainable engineering. That is operational drag. This maintenance burden represents one of the most overlooked component testing limitations.

Flakiness Multiplies Maintenance Effort

Flaky tests amplify the problem.

Google’s research shows flaky tests consume approximately 2% of total developer time annually, which equates to one full-time engineer for every 50 developers. In enterprise environments with hundreds of engineers, this compounds quickly.

Every unstable test demands:

- Investigation

- Log analysis

- Reproduction attempts

- Temporary disabling

- Rewriting fixtures

- Updating mocks

Multiply that across thousands of component tests and dozens of services, and scalable test automation begins to feel less scalable. Instead of accelerating delivery, automation becomes a maintenance ecosystem that teams constantly repair.

Mocking at Scale Creates Structural Fragility

Component testing relies heavily on mocks and stubs. At small scale, that improves speed and focus. At enterprise scale, mocks drift from real behavior. Contracts change. APIs evolve. Data schemas update. Dependencies move independently across teams.

Component tests continue to pass because they validate mocked behavior — not real system interaction. This creates a dangerous disconnect.
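A minimal sketch of that disconnect, using Python's standard `unittest.mock`. The customer service, its payload fields, and the function names are all hypothetical. The assumption is that the provider team split `name` into `first_name` and `last_name` while the consumer's mock kept the old shape.

```python
from unittest.mock import Mock


def format_customer(client, customer_id):
    """Consumer code written against the OLD contract: {'name': ...}."""
    payload = client.get_customer(customer_id)
    return payload["name"].title()


class RealCustomerService:
    """Stand-in for the real provider after a hypothetical contract change."""

    def get_customer(self, customer_id):
        # 'name' no longer exists in the response.
        return {"first_name": "ada", "last_name": "lovelace"}


def component_test_with_mock():
    """Passes, because the mock still returns the stale payload shape."""
    client = Mock()
    client.get_customer.return_value = {"name": "ada lovelace"}
    return format_customer(client, 42) == "Ada Lovelace"


def integration_check_against_real_service():
    """Fails, because the real response no longer has a 'name' key."""
    try:
        format_customer(RealCustomerService(), 42)
        return True
    except KeyError:
        return False


if __name__ == "__main__":
    print("component test passes:", component_test_with_mock())
    print("integration check passes:", integration_check_against_real_service())
```

The component test reports success and the integration check reports failure, even though both exercise the same consumer function. Nothing in the mocked test can detect the change, because the mock encodes what the consumer expects, not what the provider sends.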

Teams assume coverage is strong. Dashboards show green builds. Meanwhile, production failures reveal integration gaps that mocks never captured.

Enterprise test automation challenges rarely originate from single modules. They originate from integration complexity. And maintaining isolated tests without systemic coordination only delays the inevitable.

Maintenance is not just a technical inconvenience. It affects velocity. It affects cost. It affects release predictability.

When automation maintenance consumes engineering bandwidth, organizations face a critical decision: continue scaling component tests, or redesign the strategy for coordination and resilience.

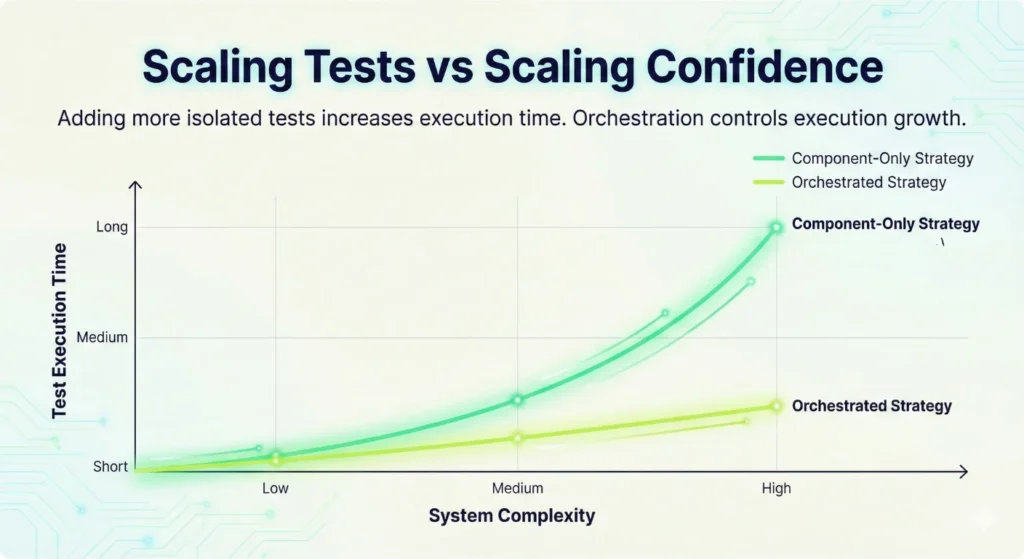

When Speed Turns into a Bottleneck: The Scalability Trap

Component tests run fast. That is one of their strongest advantages.

A single unit test completes in milliseconds. Thousands of them finish in seconds. Developers rely on that speed to keep feedback loops tight.

But scale changes the equation. Speed per test does not equal speed per pipeline.

More Tests Do Not Automatically Mean Faster Delivery

As systems expand, teams add more component tests to protect new services, new endpoints, and new edge cases. Test count grows linearly. Infrastructure demand grows with it. CI pipelines lengthen. Parallelization becomes mandatory.

CircleCI notes that 10,000 unit tests can execute in approximately 30 seconds, but achieving equivalent workflow coverage through higher-level tests can take hours.

The lesson is not that unit tests are bad. The lesson is that volume alone does not guarantee system confidence.

When enterprises attempt to compensate for integration gaps by writing more component tests, they create execution pressure without solving coverage gaps.

That is not scalable test automation. That is test inflation.

Integration Complexity Extends Execution Time

Enterprise systems rarely consist of simple synchronous flows.

They include:

- Distributed services

- Event-driven messaging

- Database replication

- API gateways

- External integrations

- Identity providers

Testing real system behavior requires environment coordination. Integration-level tests frequently move execution time from milliseconds into seconds or minutes due to environmental dependencies and real system interaction.

When teams attempt to simulate these interactions inside component tests through heavy mocking, they keep execution fast but gain only artificial confidence. When they test them at integration level without orchestration, pipelines stall.

Either way, enterprises face test automation challenges that isolated strategies cannot absorb efficiently.

Pipeline Instability Slows the Organization

As execution time increases, teams introduce workarounds:

- Split test suites

- Run nightly builds instead of per-commit

- Reduce test coverage in feature branches

- Disable unstable tests

Each workaround introduces risk.

Eventually, pipeline duration becomes a business metric. Leadership questions why releases take longer. Developers feel friction in every merge.

Component testing alone does not create this bottleneck. But scaling it without orchestration does.

Scalable test automation requires intelligent sequencing, parallelization strategies, environment provisioning, and workflow coordination. Without those controls, test execution grows faster than delivery capacity.
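One way to picture sequencing and parallelization together is a dependency graph of test suites, executed in waves. The sketch below uses Python's standard `graphlib.TopologicalSorter` and `ThreadPoolExecutor`. The suite names, the `run_suite` placeholder, and the stop-on-failure policy are assumptions for illustration only.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical suites mapped to the suites they depend on.
DEPENDENCIES = {
    "auth_api": set(),
    "catalog_api": set(),
    "checkout_flow": {"auth_api", "catalog_api"},
    "order_emails": {"checkout_flow"},
}


def run_suite(name):
    """Placeholder for invoking a real test suite; always passes here."""
    return name, True


def run_in_waves(dependencies, runner=run_suite, max_workers=4):
    """Run independent suites in parallel, wave by wave, in dependency order.

    Returns the list of waves that were actually executed.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            ready = sorted(sorter.get_ready())  # suites with no pending dependencies
            results = list(pool.map(runner, ready))
            waves.append(ready)
            if any(not ok for _, ok in results):
                break  # simple policy: schedule nothing further after a failure
            sorter.done(*ready)
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(run_in_waves(DEPENDENCIES), start=1):
        print(f"wave {number}: {wave}")
```

With the graph above, the two API suites run side by side, the checkout flow waits for both, and the email suite runs last. A production scheduler would likely keep running branches that do not depend on the failed suite. This sketch stops everything to keep the logic short.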

And when testing becomes the slowest step in the pipeline, teams either slow down — or bypass quality gates. Neither option supports enterprise reliability.

The Strategic Shift: From Isolated Tests to Orchestrated Workflows

Enterprise teams do not struggle because they lack tests. They struggle because their tests do not operate as a system.

Component testing protects logic. It does not coordinate environments. It does not manage state across workflows. It does not intelligently route failures. It does not sequence dependent validations across services. And it does not provide visibility into end-to-end execution health.

That gap is exactly where modern enterprises experience friction. Scalable test automation requires more than scripts. It requires workflow intelligence.

It requires:

- Coordinated execution across services

- Real-time decision logic

- Environment-aware workflows

- Data propagation across stages

- Built-in retry and failure strategies

- Cross-platform validation across web, mobile, API, and desktop

This is not an incremental improvement to component testing. It is a structural upgrade. And this is where Test Orchestration becomes critical.
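To make two of those requirements concrete, data propagation across stages and decision logic based on outcomes, here is a deliberately small sketch of a workflow runner. It is not how Qyrus or any other product implements orchestration. Every step, field, and name below is hypothetical.

```python
def login(ctx):
    # A real step would call an auth service; this one fakes a token.
    ctx["token"] = "token-for-" + ctx["user"]
    return True


def create_order(ctx):
    # Uses the token produced by the previous step instead of a fixture.
    if "token" not in ctx:
        return False
    ctx["order_id"] = 1001
    return True


def verify_order(ctx):
    return ctx.get("order_id") == 1001


def cleanup(ctx):
    ctx["cleaned_up"] = True
    return True


# Each step names the next step on success and on failure (None ends the flow).
WORKFLOW = {
    "login": (login, "create_order", "cleanup"),
    "create_order": (create_order, "verify_order", "cleanup"),
    "verify_order": (verify_order, "cleanup", "cleanup"),
    "cleanup": (cleanup, None, None),
}


def run_workflow(workflow, start, ctx):
    """Walk the workflow, passing one shared context through every step."""
    trail = []
    step = start
    while step is not None:
        action, on_pass, on_fail = workflow[step]
        passed = action(ctx)
        trail.append((step, passed))
        step = on_pass if passed else on_fail
    return trail


if __name__ == "__main__":
    context = {"user": "ada"}
    for name, passed in run_workflow(WORKFLOW, "login", context):
        print(name, "passed" if passed else "failed")
```

The shared `ctx` dictionary is what carries state from one stage to the next, and the success and failure targets are what let a failed step branch to cleanup instead of running dependent validations against missing data.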

Why Qyrus Test Orchestration Changes the Equation

Qyrus Test Orchestration was designed for enterprise systems that outgrew isolated automation. Instead of running disconnected test scripts, Qyrus enables workflow-based execution that mirrors how real systems behave.

With Qyrus, teams can:

- Build visual test flows that coordinate complex scenarios

- Execute conditional branching based on real-time outcomes

- Maintain state and session continuity across steps

- Parallelize independent nodes to reduce execution time

- Apply retry logic and fallback strategies intelligently

- Manage environments centrally across Dev, QA, Staging, and Production

This approach directly addresses the core component testing limitations discussed throughout this article.

It transforms automation from a collection of scripts into an execution framework. That is the difference between having tests — and having confidence.

For enterprises facing enterprise test automation challenges, orchestration provides clarity where isolation creates blind spots. It aligns automation with system architecture. And when automation aligns with architecture, it becomes sustainable.

Stop Scaling Tests. Start Scaling Confidence.

Component testing remains essential. But enterprise systems demand more.

- They demand validation across boundaries.

- They demand coordinated workflows.

- They demand resilience under real-world conditions.

Organizations that continue scaling isolated tests will continue fighting maintenance, flakiness, and execution bottlenecks.

Organizations that adopt orchestrated, scalable test automation build release confidence at speed. The choice is strategic.

If your team is experiencing growing pipeline instability, rising maintenance costs, or integration defects slipping into staging, it is time to rethink the structure of your automation.

Not by adding more component tests. But by orchestrating them.

Ready to Move Beyond Isolated Testing?

See how Qyrus Test Orchestration helps enterprise teams coordinate complex workflows, reduce instability, and scale automation intelligently. Try Qyrus Test Orchestration and experience workflow-driven automation built for enterprise scale.