Flaky tests are a reporting problem, not just an engineering problem

Most teams treat a flaky test as code to be fixed. It fails intermittently, someone retries it, maybe adds a wait, maybe quarantines it, maybe deletes it. The fix is the focus. The measurement isn’t.

That’s how flakiness stays permanent. You can’t reliably fix what you can’t see clearly. And in most CI pipelines, flaky and broken tests look identical on the surface: both produce red runs, both block merges, both cost engineering time. The difference only shows up in patterns, and patterns only show up in reporting.

Before flakiness is an engineering problem, it’s a measurement problem. The scale of that problem is bigger than most teams realize. Google’s engineering team found that 84% of transitions from passing to failing in their CI were flaky, not real bugs.

Bitrise’s Mobile Insights 2025 report, built on over 10 million builds across 3.5 years, found that the proportion of teams experiencing flakiness grew from 10% in 2022 to 26% in 2025. The problem is not going away, and retry loops are not a strategy for dealing with it.

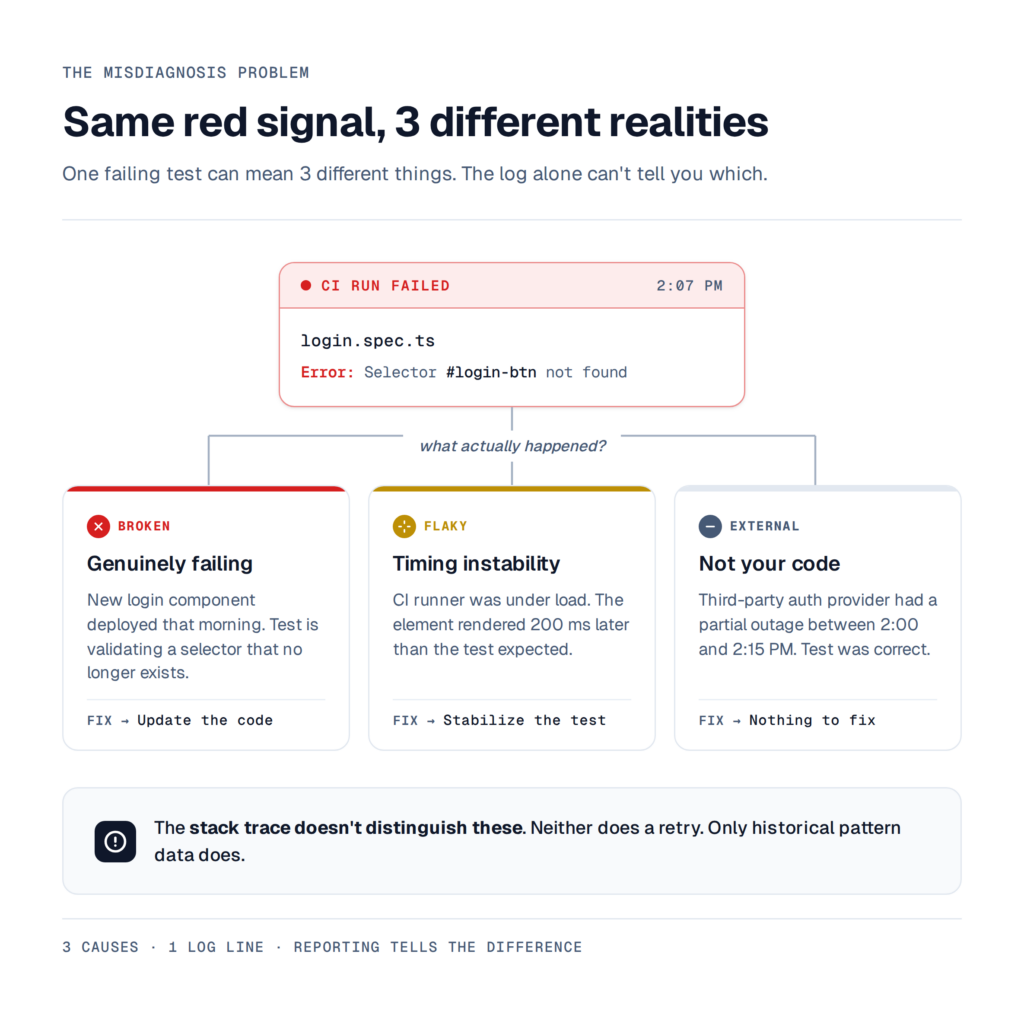

The misdiagnosis problem

Picture a login test that fails on a Tuesday afternoon. The stack trace says the element wasn’t found. What actually happened?

- Option 1: the app deployed a new login component that morning, and the test is genuinely broken.

- Option 2: the CI runner was under load and the element rendered 200 ms later than usual, and the test is flaky.

- Option 3: a third-party auth provider had a partial outage between 2:00 and 2:15 PM, and the test wasn’t wrong at all.

The raw failure log tells you none of this. A retry might pass and make the question go away, but it doesn’t answer it. The next time that test fails, you’re back at square one, reading the same stack trace, making the same guess.

This is what happens when flakiness is treated as an engineering artifact instead of a data artifact. Each failure gets handled in isolation. No history accumulates. No pattern surfaces. Engineers build intuition about which tests are “known flaky,” but that intuition lives in someone’s head, not in the pipeline.

What reporting intelligence actually means for flaky tests

Flaky test detection is not the statement “the test failed.” It’s the statement “this test has a 14% failure rate over the last 200 runs, failures cluster on Chromium on Linux CI, they spike between 2 and 4 PM UTC, and 80% of them share the same error signature.” That’s a different level of information. It turns a red test into a diagnosis.

Proper flaky test management requires tracking a small number of things consistently. The flake rate over time, per test and per branch, because a test at 2% flake rate is a quarantine candidate and a test at 30% is broken.

Failure signatures, where stack traces and error messages get normalized and grouped, because 50 failures sharing one signature is 1 bug, not 50. Environment deltas, because a test that flakes only on Chromium is telling you something specific about the browser, not about the assertion.

And a historical trend, so that when a test starts flaking on March 14 after a dependency bump, the correlation is obvious within minutes rather than weeks.

None of this is a fix. It’s a diagnosis. Without the diagnosis, every fix is a guess.

Atlassian’s engineering team eventually built Flakinator, a dedicated platform that ingests test run data, detects flakes across millions of daily executions, and automatically assigns tickets to code owners.

Slack’s engineering team documented the same pattern: auto-detection, suppression, regular re-evaluation of suppressed tests, notifications to feature teams. Neither of those is a test fix. They’re both reporting layers. The fixes happen downstream of the visibility.

The gap between healing and understanding

There’s a category of tooling built around fixing flakiness automatically: self-healing selectors, auto-retries, AI-suggested patches. These are useful. They’re also, by design, downstream of the question that matters most: should this test heal, or should it fail?

A self-healing selector that adapts from #login-btn to #signin-btn is helpful when the intent is the same. It becomes a bug-hider if a developer renamed the login flow to a separate page and the test is now validating the wrong thing. From the outside, both look like a green test.

Auto-retries carry the same risk. A retry that succeeds on the second attempt can mean the test is legitimately flaky and the retry absorbed it.

It can also mean there’s a race condition in production that affects 1 in 8 real users. Without reporting that tracks retry behavior over time, both outcomes produce the same green checkmark and the CI run looks healthy.

Healing without understanding converts a visible problem into an invisible one. The test suite looks stronger. The underlying signal is gone.

What better flaky test management looks like in practice

The operational version of this is unglamorous. It’s a dashboard, not a feature. But it’s the dashboard that makes every other flakiness strategy coherent.

For a Playwright team serious about test stability, five signals matter more than the rest.

The first is a per-test flake rate score, updated on every CI run and visible per branch and environment. Then there’s failure grouping by error signature, so 50 failures sharing a root cause collapse into 1 diagnosis instead of 50 tickets.

Trace and video artifacts get attached to every failing run, not just the most recent one, so failures can be compared across days. Environment comparison reveals how the same test behaves on Chromium versus Firefox, CI versus local, or Ubuntu versus macOS.

And a timeline view makes a test that starts flaking after a dependency bump obvious within minutes, not weeks later.

This is why dedicated Playwright test reporting exists as a tooling category with tools like TestDino being the leader. Generic CI log viewers are built to show you the last run. Flaky test detection is a question about the last 200 runs. Different question, different tool.

Once this layer exists, every decision downstream becomes cheaper. Should this test be quarantined? Check its flake rate. Should this selector be auto-healed? Check whether its failure signature is consistent with a refactor or with a timing issue. Should this merge be blocked? Check whether the failure cluster is environment-specific or universal.

Without the layer, every decision is a judgment call made on a single data point.

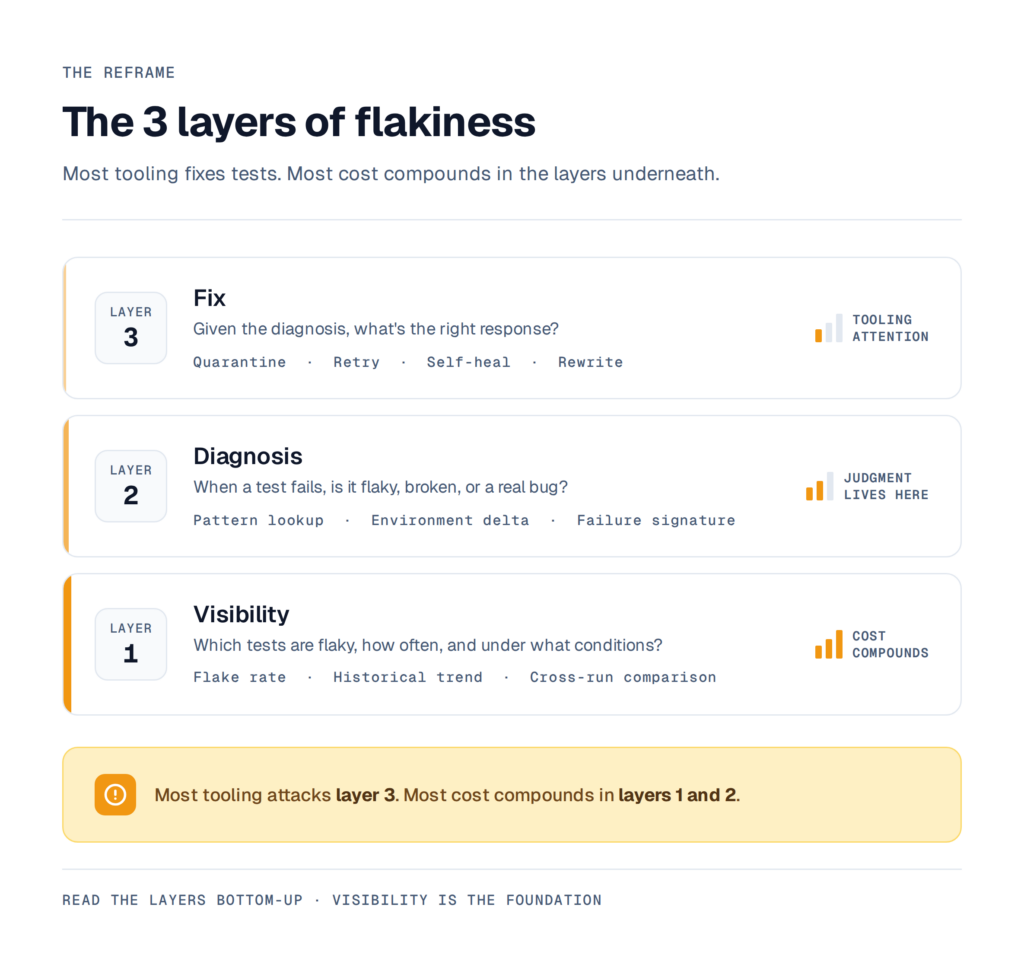

The reframe

Flakiness isn’t really one problem. It’s three, layered on top of each other. There’s a visibility problem: which tests are actually flaky, how often, and under what conditions? There’s a diagnosis problem: when a test fails, is it flaky, broken, or pointing at a real bug? And there’s a fix problem: given the diagnosis, what’s the right response?

Most tooling attacks the third layer. Most teams spend their time there too. But the first two layers are where the compounding cost lives. A test suite with clean reporting can tolerate a lot of flakes, because the team knows what they are.

A test suite without reporting can have 5 genuinely flaky tests and still feel like a constant fire, because nobody can distinguish the signal from the noise.

Test stability is the ability to explain every flake the moment it happens. A suite with 20 flaky tests and clean reporting can still be considered stable, because the team knows what each one is.

A suite with 3 flaky tests and no reporting is not stable at all, because the team is guessing. That explanation doesn’t come from the test code. It comes from the reporting layer underneath it.

Self-healing fixes tests. Reporting fixes the understanding of tests. Only one of those scales.