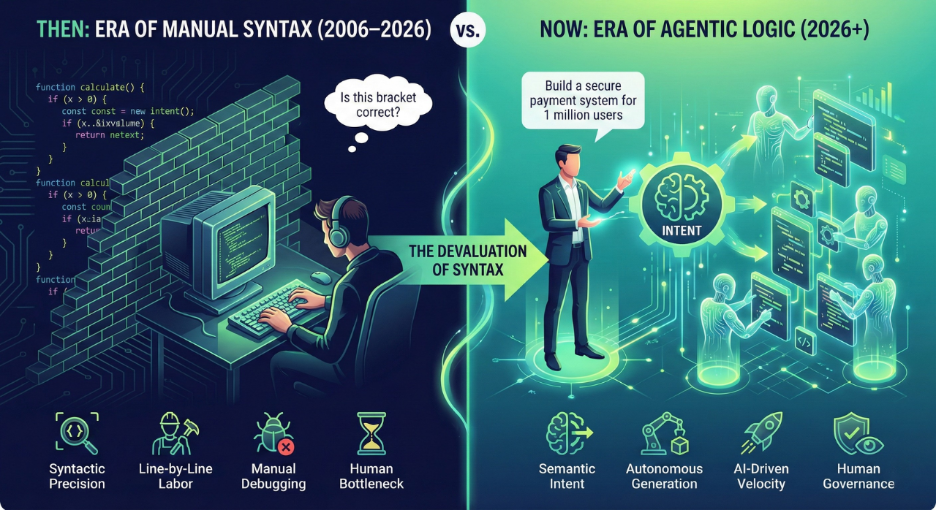

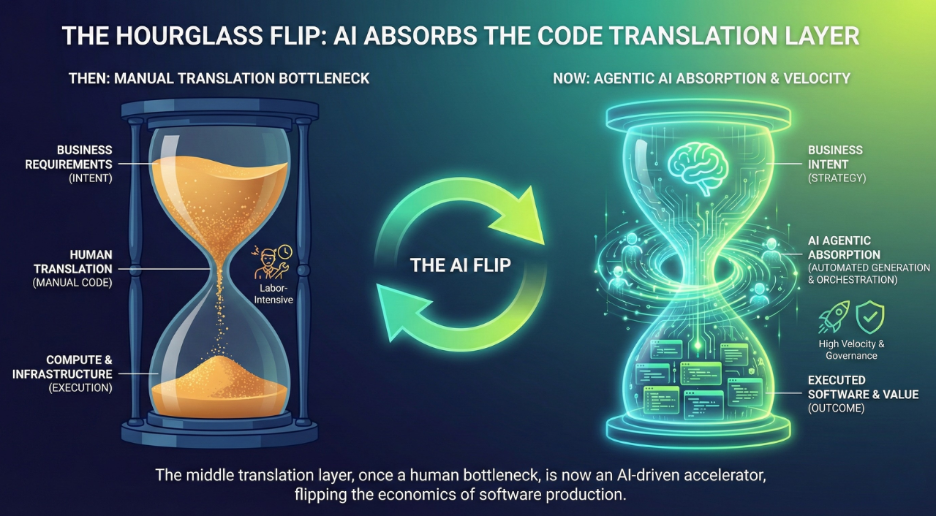

Modern software development moves faster than most QA teams can validate. Generative AI now contributes directly to code creation, and CI/CD pipelines push changes into production at high frequency. Testing has not kept up. Teams still depend on script-heavy automation, fragmented tools, and manual validation cycles. As release velocity increases, validation becomes the primary enterprise bottleneck.

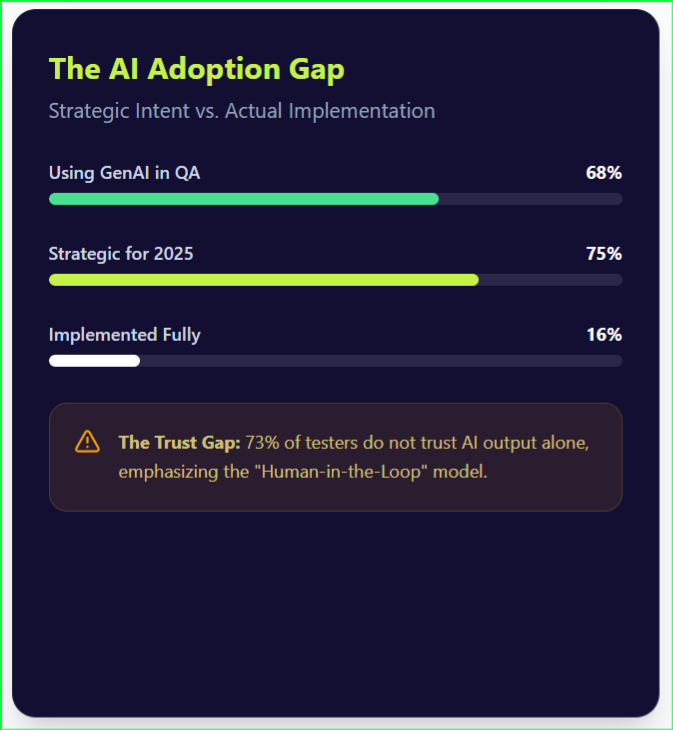

This widening velocity gap between development and validation is forcing enterprises to rethink how quality is engineered. Early enterprise AI adoption focused on chat-based assistance. These systems generated answers and suggested code in isolation. They did not execute end-to-end workflows. They required constant human direction and offered limited impact on actual delivery speed.

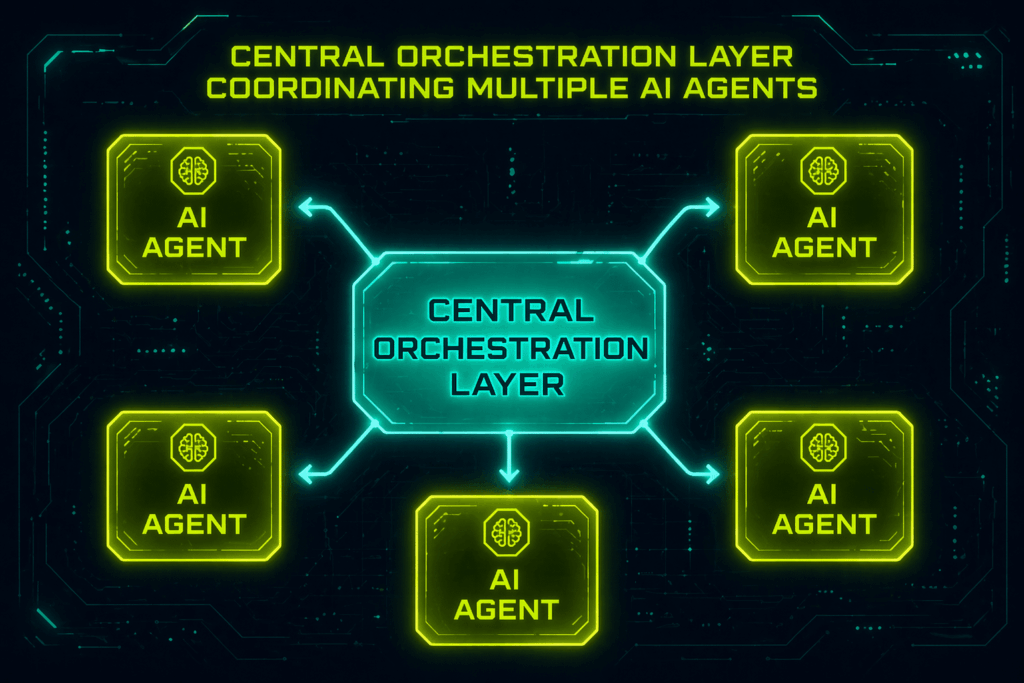

An agentic orchestration platform changes that model. It introduces a coordinated execution layer that connects development activity to continuous validation. Instead of isolated tools, it enables AI agent coordination across the testing lifecycle. Autonomous agents generate tests, execute them, and maintain coverage without manual intervention. A self-orchestrating QA system of this kind helps quality keep pace with the speed of innovation.

What Is an Agentic Orchestration Platform?

Legacy test automation often behaves like a house of cards. A minor UI change can break entire regression suites, forcing teams into constant maintenance. An agentic orchestration platform replaces that fragile model with a resilient, AI-driven coordination layer designed for continuous adaptation.

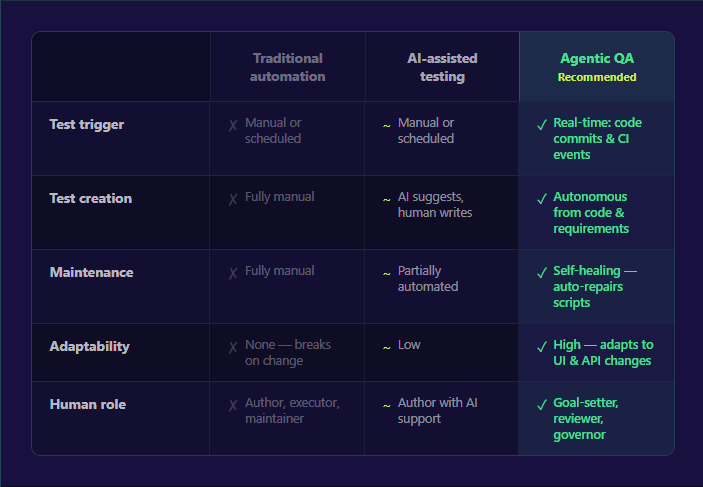

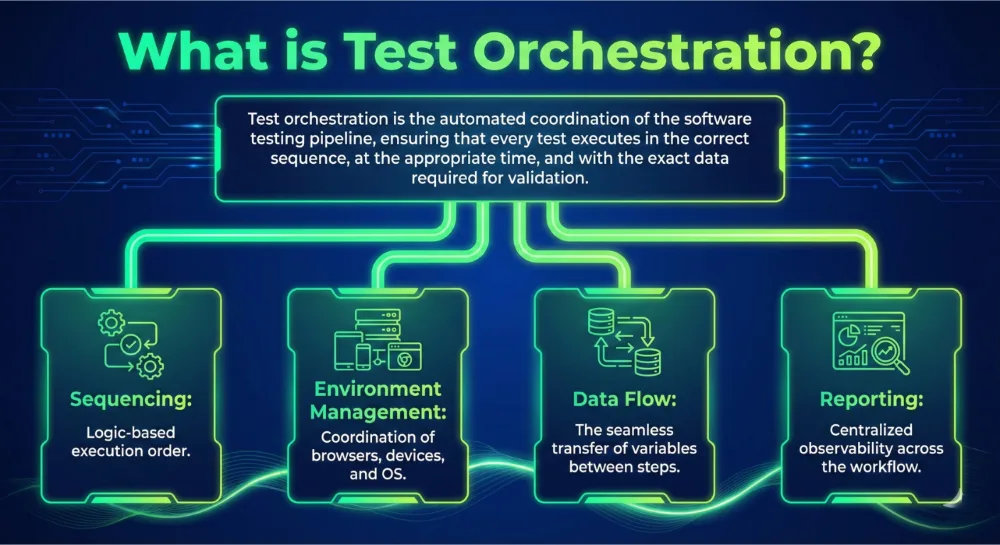

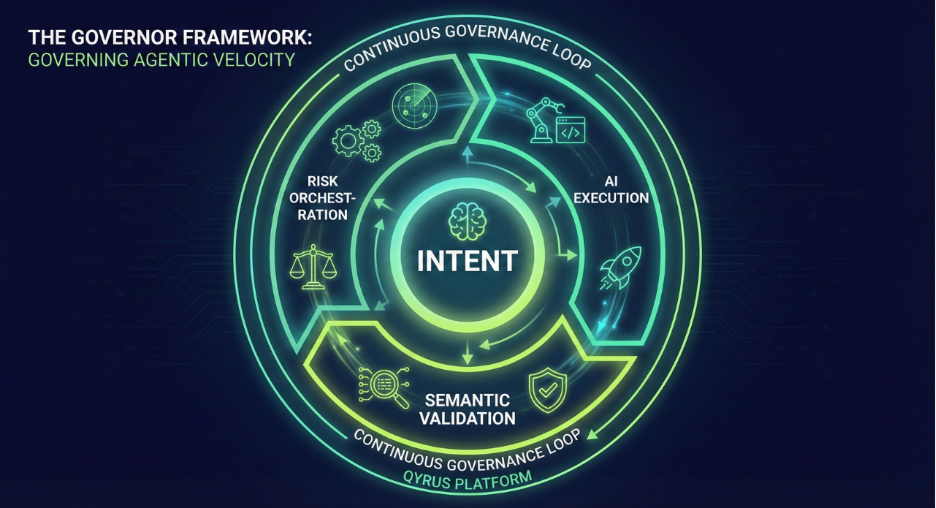

An agentic orchestration platform is a centralized execution layer that coordinates autonomous AI agents, enterprise systems, and workflows. It dynamically orchestrates test generation, execution, validation, and reporting based on real-time system changes. This marks a clear shift from rules-based automation to adaptive, agentic workflows. Traditional testing depends on anticipating every failure path. In contrast, an orchestration platform enables objective-based testing. Teams define what needs to be validated, and the system determines how to test it.

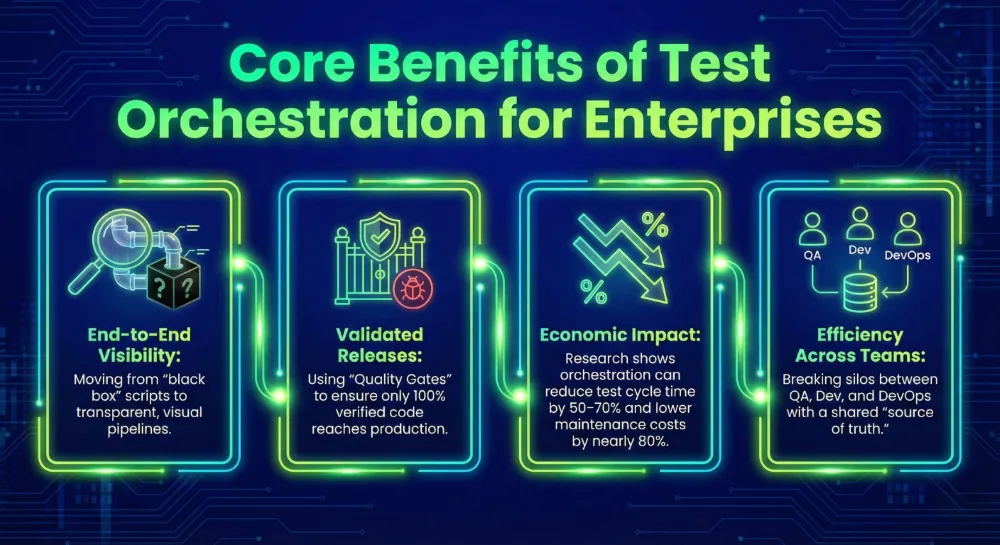

Specialized agents operate with defined roles within this multi-agent system. Some focus on UI validation, while others handle API virtualization or exploratory testing. These agents execute in parallel and collaborate to handle complex workflows that span multiple systems. The orchestration layer synchronizes their activities and integrates them with CI/CD pipelines and broader enterprise systems. This shifts human intervention from operational tasks like writing scripts to strategic governance and policy definition.

Why Traditional QA and Automation Are Breaking at Scale

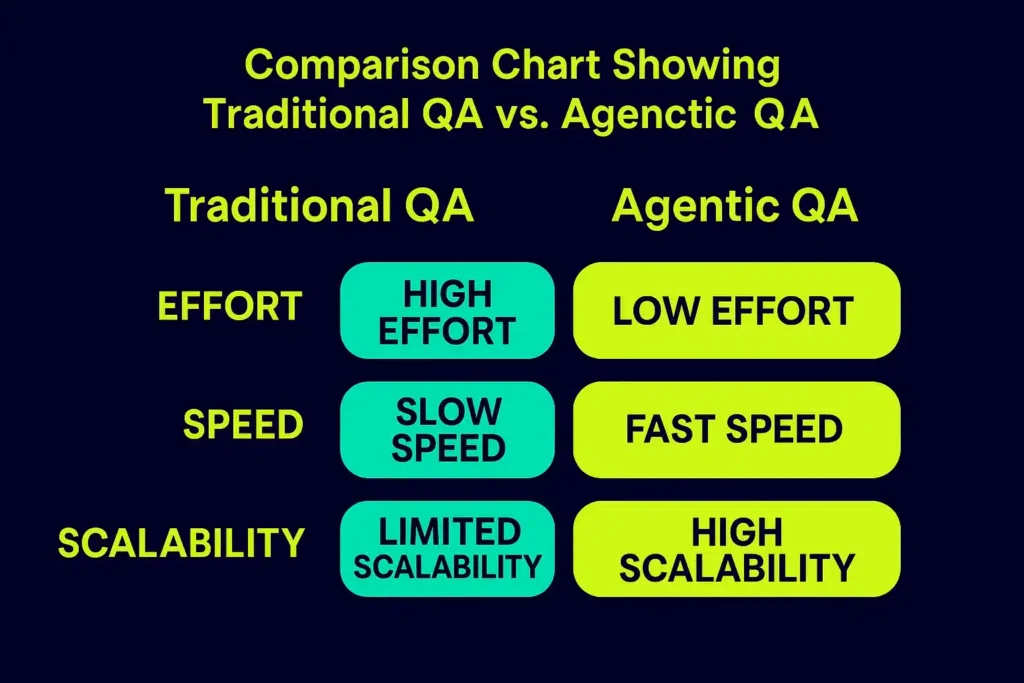

Traditional automation has hit a ceiling. Most enterprises rely on rigid, predefined scripts that crumble the moment a developer changes a UI element. This fragility forces teams into a cycle of constant maintenance. Testers often spend more time fixing old tests than validating new features.

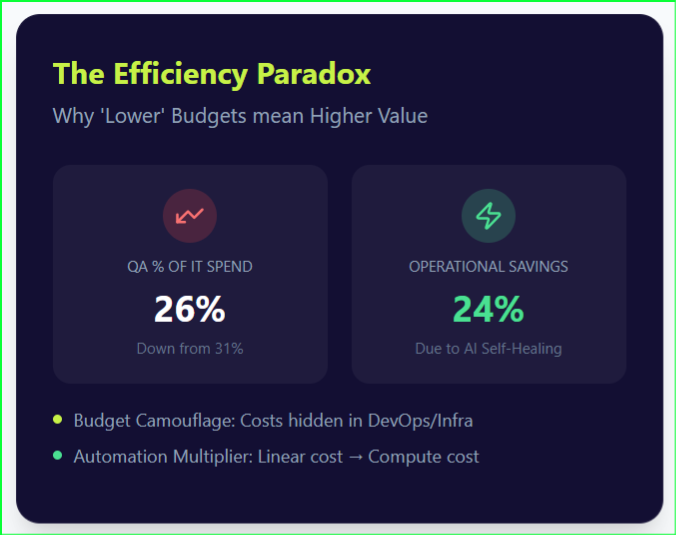

The resulting accumulation of test debt creates a bottleneck that cancels out the gains made by high-velocity development teams. Regression suites become harder to maintain at scale, and result analysis often requires manual triaging across disconnected tools. Organizations face significant ROI and maturity challenges as they try to scale these legacy systems. Fragmented toolchains lack the unified AI agent coordination necessary for modern, cross-system workflows.

The impact is undeniable: slower release cycles and inconsistent user experiences. Teams need Self-Healing Workflows that adapt to environmental changes in real time. Moving to this model can significantly improve testing efficiency and reduce maintenance effort, especially in fast-changing UI environments.

Core Architecture of an Agentic Orchestration Platform

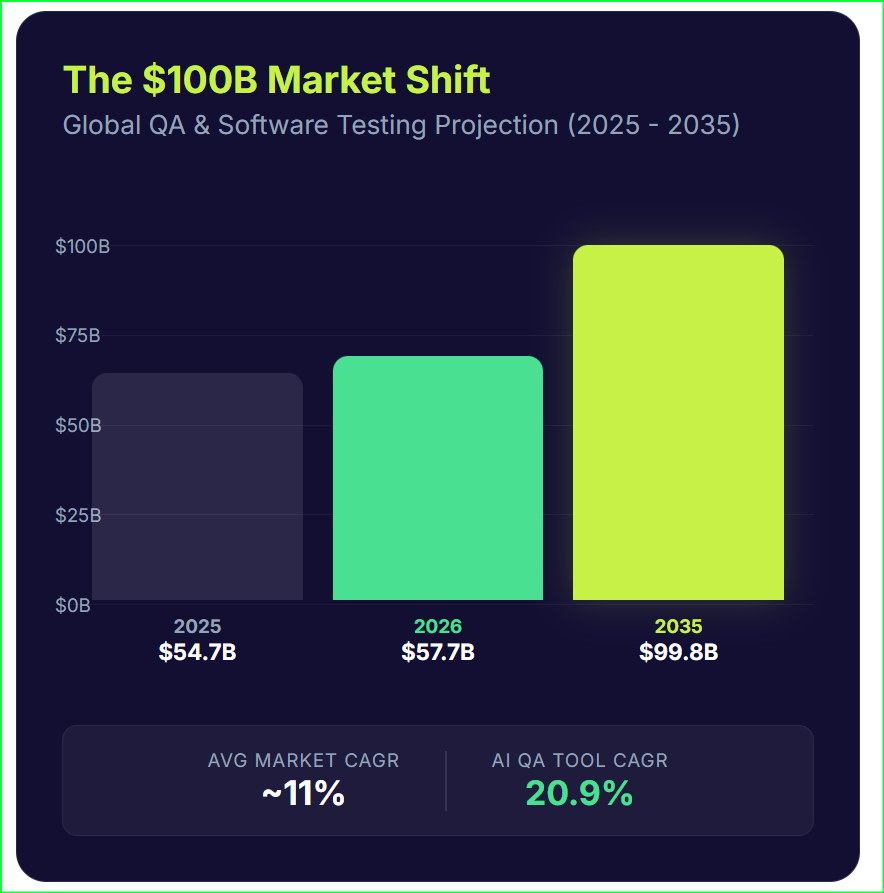

Modern enterprise software needs a structured environment where intelligence can scale. This architectural necessity drives the AI orchestration market toward a projected USD 30.23 billion valuation by 2030 (MarketsandMarkets, 2025).

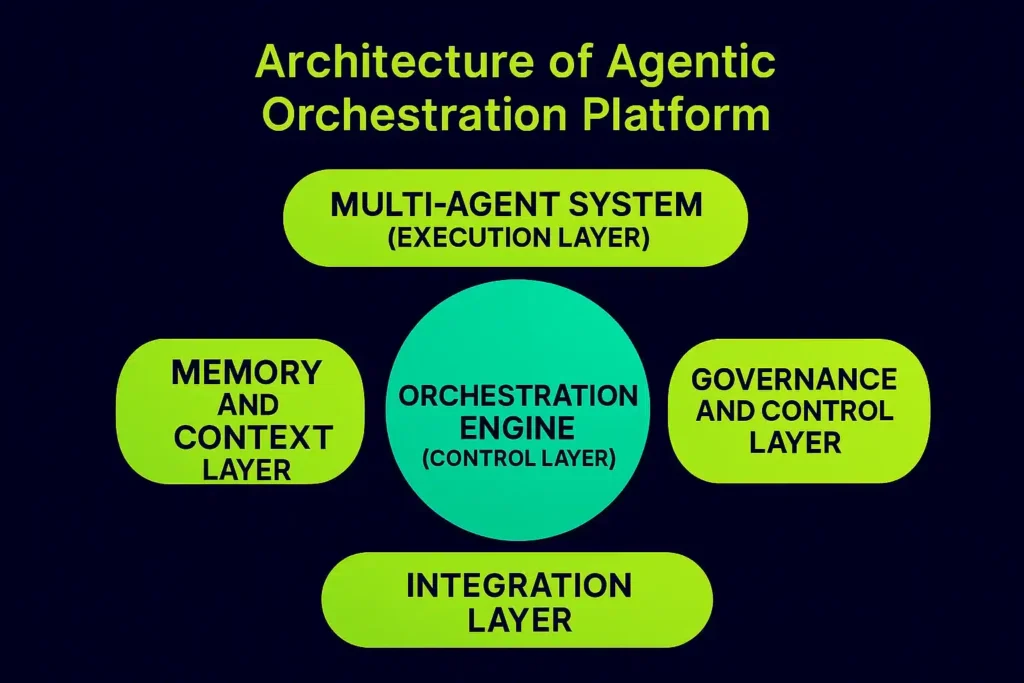

Orchestration Engine (Control Layer)

The Orchestration Engine acts as the central coordinator of all workflows. It processes high-level business objectives and deconstructs them into discrete, executable tasks. Rather than following a linear path, it supports sequential workflows, parallel execution, and event-driven triggers. The engine continuously monitors the execution state, allowing it to adjust workflows dynamically if it encounters environmental shifts.
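The decomposition described above can be sketched as a small dependency graph. This is a minimal illustration, not any vendor's implementation: the task names, the `PLAN` mapping, and the `run_task` placeholder are all hypothetical. It shows only the sequential and parallel cases; event-driven triggers are left out.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical decomposition of one objective ("validate checkout") into tasks.
# Each key maps a task to the set of tasks it depends on.
PLAN = {
    "generate_tests": set(),
    "run_ui_tests": {"generate_tests"},
    "run_api_tests": {"generate_tests"},
    "report": {"run_ui_tests", "run_api_tests"},
}


def run_task(name: str) -> str:
    # Placeholder for real agent work (assumption for illustration).
    return f"{name}: done"


def execute(plan: dict) -> list:
    """Run tasks in dependency order; tasks in the same wave run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()
    waves = []
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = sorted(sorter.get_ready())  # all tasks whose dependencies are met
            list(pool.map(run_task, ready))     # execute the whole wave concurrently
            sorter.done(*ready)                 # unlock the tasks that depend on them
            waves.append(ready)
    return waves


waves = execute(PLAN)
print(waves)
```

The UI and API runs land in the same wave because neither depends on the other, while reporting waits for both.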

Multi-Agent System (Execution Layer)

This layer consists of autonomous AI agents with specialized roles. You might deploy UI testing agents to simulate real user interactions or API agents to verify backend microservices. These units collaborate to solve complex, cross-system problems. This enables massive parallel testing across diverse environments.
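As a rough sketch of role-based agents running side by side, the snippet below models each agent as a role plus a check function. The `Agent` class, the two checks, and the shape of the `build` dictionary are assumptions made for illustration only.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Agent:
    role: str                      # e.g. "ui" or "api"
    check: Callable[[dict], bool]  # returns True when validation passes


def ui_check(build: dict) -> bool:
    # Hypothetical UI check: the login button must be present.
    return "login_button" in build["ui_elements"]


def api_check(build: dict) -> bool:
    # Hypothetical API check: the health endpoint must return 200.
    return build["api_status"] == 200


AGENTS = [Agent("ui", ui_check), Agent("api", api_check)]


def run_agents(build: dict) -> dict:
    """Run every agent against the same build in parallel and collect results by role."""
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        futures = {agent.role: pool.submit(agent.check, build) for agent in AGENTS}
        return {role: future.result() for role, future in futures.items()}


build = {"ui_elements": ["login_button", "cart"], "api_status": 500}
results = run_agents(build)
print(results)
```

Here the UI agent passes while the API agent flags the failing backend, and the orchestration layer sees both verdicts in one result.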

Memory and Context Layer

Retention separates sophisticated agents from simple automation bots. This layer manages both short-term session data and long-term context retention. By maintaining a history of previous runs and system states, the platform facilitates continuous learning and adaptation. This is particularly critical for long-running workflows where the system must remember the outcomes of early stages to make informed decisions during later validation steps.
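One way to picture the two kinds of retention is a small in-memory store. This is a sketch under stated assumptions: the `Memory` class, its method names, and the 0.5 flakiness threshold are hypothetical, and a real platform would persist history rather than keep it in a list.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    session: dict = field(default_factory=dict)  # short-term: outcomes of the current run
    history: list = field(default_factory=list)  # long-term: one snapshot per finished run

    def record(self, stage: str, outcome: str) -> None:
        self.session[stage] = outcome

    def end_run(self) -> None:
        # Archive the session into long-term history, then reset it.
        self.history.append(dict(self.session))
        self.session.clear()

    def failure_rate(self, stage: str) -> float:
        outcomes = [run[stage] for run in self.history if stage in run]
        return outcomes.count("fail") / len(outcomes) if outcomes else 0.0


memory = Memory()
for outcome in ["pass", "fail", "fail"]:  # three earlier runs
    memory.record("login", outcome)
    memory.end_run()

# Current run: a later stage consults what happened earlier in the same run
# (short-term) and how the stage behaved historically (long-term).
memory.record("login", "pass")
run_checkout = memory.session.get("login") == "pass"
login_is_flaky = memory.failure_rate("login") > 0.5
print(run_checkout, login_is_flaky)
```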

Integration Layer

True orchestration requires a connected stack. The integration layer hooks directly into your CI/CD pipelines, including GitHub, Jenkins, and Azure DevOps. It synchronizes data across microservices and legacy enterprise systems, ensuring seamless communication.
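To make the CI/CD hook concrete, the sketch below pulls the changed file paths out of a GitHub-style push webhook payload, whose commits carry `added`, `modified`, and `removed` lists. The sample payload is fabricated for illustration and trimmed to the fields used here; how a given platform actually consumes such events is an assumption.

```python
import json


def changed_files(payload: dict) -> list:
    """Collect the unique file paths touched by all commits in a push event."""
    files = set()
    for commit in payload.get("commits", []):
        for key in ("added", "modified", "removed"):
            files.update(commit.get(key, []))
    return sorted(files)


# Illustrative payload only; real push events contain many more fields.
sample = json.loads("""
{
  "ref": "refs/heads/main",
  "commits": [
    {"added": ["cart/api.py"], "modified": ["cart/views.py"], "removed": []},
    {"added": [], "modified": ["cart/views.py", "search/index.py"], "removed": []}
  ]
}
""")

files = changed_files(sample)
print(files)
```

The resulting list is the kind of input a downstream impact analysis could start from.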

Governance and Control Layer

The governance layer defines the rules, policies, and guardrails that keep autonomous agents within enterprise boundaries. It enables human-in-the-loop approvals for high-stakes actions, ensuring traceability and auditability in a production-grade environment.
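A guardrail with human-in-the-loop approval can be sketched as a simple authorization gate that logs every decision. The `Governor` class, the action names, and the `approved_by` parameter are hypothetical; the point is only that high-stakes actions are blocked until a named human approves them, and that every request leaves an audit record.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy: actions that always require a human approver.
HIGH_STAKES_ACTIONS = {"deploy_to_production", "delete_test_data"}


@dataclass
class Governor:
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: str, action: str, approved_by: Optional[str] = None) -> bool:
        needs_approval = action in HIGH_STAKES_ACTIONS
        allowed = (not needs_approval) or approved_by is not None
        # Every decision is recorded, whether allowed or denied, for traceability.
        self.audit_log.append(
            {"agent": agent, "action": action, "approved_by": approved_by, "allowed": allowed}
        )
        return allowed


governor = Governor()
routine = governor.authorize("ui-agent", "run_regression")
blocked = governor.authorize("release-agent", "deploy_to_production")
approved = governor.authorize("release-agent", "deploy_to_production", approved_by="qa-lead")
print(routine, blocked, approved, len(governor.audit_log))
```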

From Automation to Autonomy: How Agentic Workflows Operate

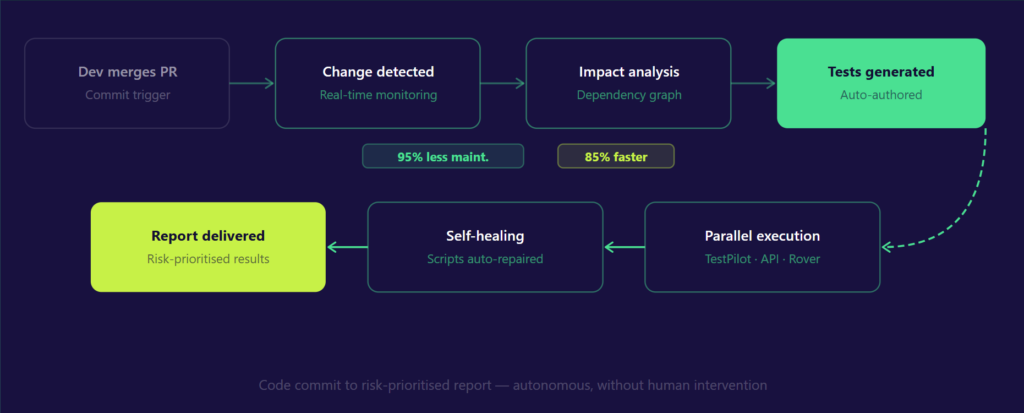

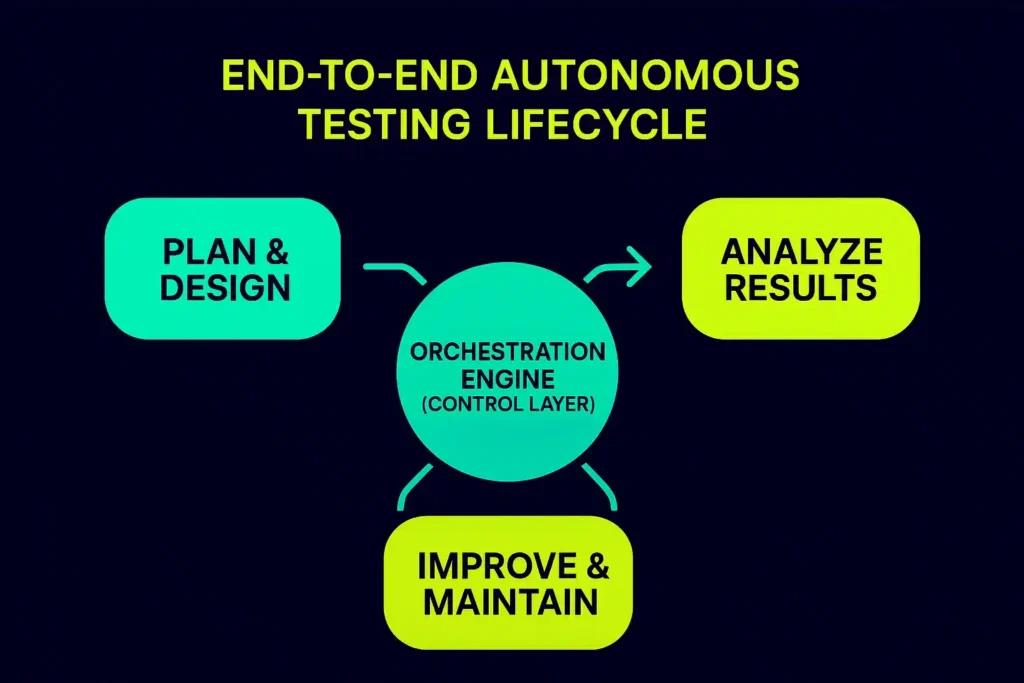

An agentic orchestration platform operates on a continuous loop that starts the moment an event occurs. The workflow begins with the “Sense” phase, where sentinels identify the location of a change. The platform then enters “Cognitive Crunch Time” to perform a deep impact analysis.

Instead of running a full regression suite, the platform determines the “blast radius” of the update. It then dynamically generates only the scenarios required to validate that specific change. If an agent encounters a minor UI shift that does not break functionality, it implements Self-Healing Workflows to update the logic on the fly.
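The "blast radius" idea can be illustrated with a breadth-first walk over a dependency graph. The module names, the `DEPENDENTS` map, and the test-to-module mapping below are invented for this sketch; a real platform would derive them from the codebase or runtime traces.

```python
from collections import deque

# Hypothetical map: module -> modules that depend on it.
DEPENDENTS = {
    "payment": ["checkout"],
    "checkout": ["order_history"],
    "order_history": [],
    "search": [],
}

# Hypothetical map: test -> module it validates.
TESTS = {
    "test_payment": "payment",
    "test_checkout": "checkout",
    "test_order_history": "order_history",
    "test_search": "search",
}


def blast_radius(changed: str) -> set:
    """Return the changed module plus everything that transitively depends on it."""
    affected = {changed}
    queue = deque([changed])
    while queue:
        for dependent in DEPENDENTS.get(queue.popleft(), []):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected


def select_tests(changed: str) -> list:
    """Pick only the tests whose module falls inside the blast radius."""
    affected = blast_radius(changed)
    return sorted(test for test, module in TESTS.items() if module in affected)


selected = select_tests("payment")
print(selected)
```

A change to `payment` pulls in the checkout and order-history tests but leaves the unrelated search test out of the run.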

This adaptability can help organizations reduce test maintenance substantially. A continuous feedback loop feeds every result into the system memory. This enables adaptive optimization over time, as the platform learns which testing strategies yield the highest quality with the least effort.
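Self-healing combined with a feedback loop can be approximated by a locator that tries fallback selectors and remembers which one worked. This is a deliberately simple stand-in: the `HealingLocator` class, the selectors, and the dictionary used as a page snapshot are assumptions, and production tools typically use richer signals than an ordered list.

```python
# Hypothetical page snapshot: selector -> element type.
# The old "#submit" id no longer exists after a UI change.
PAGE = {"#submit-order": "button", "[data-test=submit]": "button"}


class HealingLocator:
    def __init__(self, candidates):
        self.candidates = list(candidates)  # ordered by preference
        self.heal_count = 0                 # how often a fallback had to be used

    def find(self, page: dict) -> str:
        for index, selector in enumerate(self.candidates):
            if selector in page:
                if index > 0:
                    # Feedback step: promote the working selector so the
                    # next run tries it first instead of the stale one.
                    self.candidates.insert(0, self.candidates.pop(index))
                    self.heal_count += 1
                return selector
        raise LookupError("no candidate selector matched; functional break, not a UI shift")


locator = HealingLocator(["#submit", "#submit-order", "[data-test=submit]"])
first = locator.find(PAGE)   # falls back and heals
second = locator.find(PAGE)  # uses the remembered selector directly
print(first, second, locator.heal_count)
```

When nothing matches, the sketch raises instead of healing, which mirrors the distinction between a cosmetic UI shift and a genuine break.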

Key Capabilities of a Modern Agentic Orchestration Platform

An agentic orchestration platform turns static quality checks into goal-oriented intelligence. This shift ensures that engineering teams do not sacrifice reliability for speed.

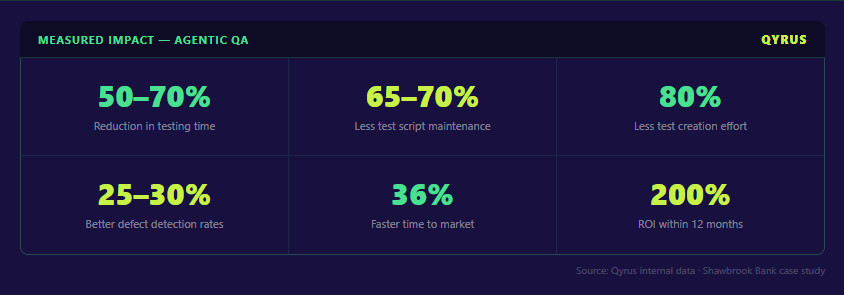

- Autonomous Test Generation: The platform analyzes application blueprints to create comprehensive test suites automatically, often reducing test creation effort significantly for repeatable flows.

- Real-Time Orchestration: The system manages multi-agent coordination across systems and workflows as changes happen, rather than waiting for scheduled runs.

- Intelligent Defect Detection: Agents perform automated root cause analysis to pinpoint the likely source of a break, improving triage speed and consistency.

- Handling Complex Problems & Edge Cases: Autonomous explorers uncover hidden bugs and untested pathways that traditional scripted tests miss.

Business Impact: Eliminating Test Debt and Accelerating Releases

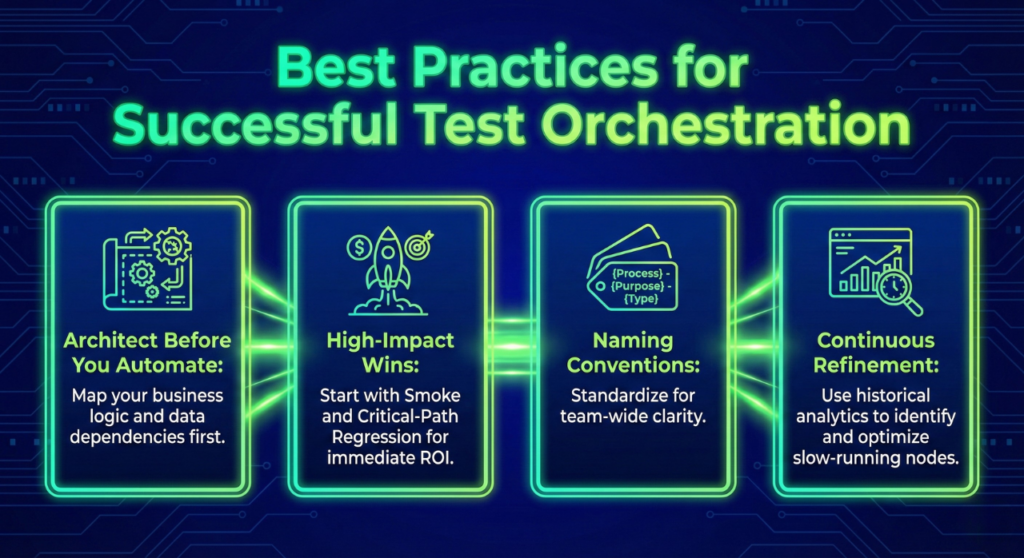

The core value of an agentic orchestration platform lies in crushing the weight of test debt. Organizations often report major reductions in test creation effort because the system generates scenarios from requirements. Self-Healing Workflows allow the platform to adapt to UI changes automatically, resulting in lower maintenance costs and better operational efficiency.

Speed increases through massive parallel testing on cloud infrastructure. This cuts execution time from hours to minutes and significantly reduces release cycles. High-velocity development no longer waits for a manual QA bottleneck. Users experience more stable releases and fewer post-launch incidents. This agility is vital as the AI orchestration sector surges toward its USD 30.23 billion target.

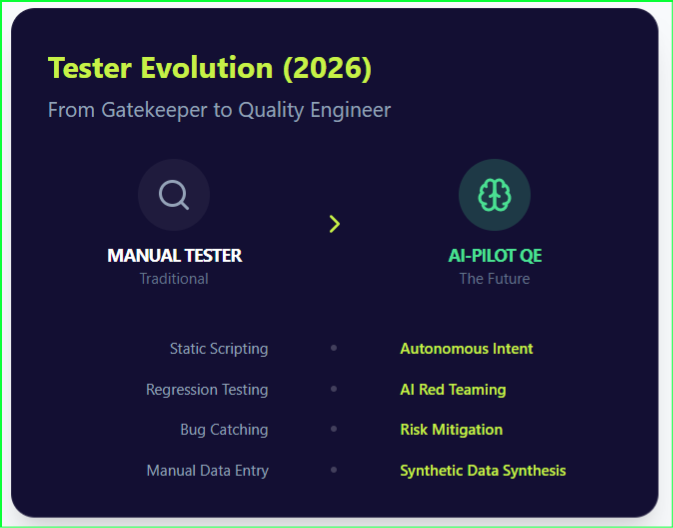

Transforming QA Roles in an Agentic Testing Model

Adopting an agentic orchestration platform redefines daily contributions. The organization shifts toward a model of “testing without manual testing effort,” where humans focus on innovation rather than repetitive tasks.

- Testers: Move from manual execution to strategy, acting as quality architects who define objectives.

- Developers: Receive faster feedback loops, allowing them to fix defects while code context is fresh.

- QA Leaders: Gain unprecedented visibility and control through centralized dashboards and predictive risk analytics.

Challenges in Adopting Agentic Orchestration Platforms

Integration with legacy enterprise systems remains a common hurdle. Connecting to decades-old software requires careful planning and robust middleware. Data shows that legacy integration is a barrier for 60% of AI leaders.

Data governance and security also demand attention. Only 21% of companies currently possess mature AI governance models for autonomous agents (Deloitte, State of AI in the Enterprise, 2026). Managing AI unpredictability is a specific risk factor, as non-deterministic results can impact the reliability of automated checks. Furthermore, infrastructure costs can be significant. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls (Gartner, 2025).

The Future of Agentic Orchestration Platforms in QA

The future belongs to more autonomous ecosystems. We are witnessing a convergence where AI platforms and DevOps pipelines merge into a single intelligent fabric. Recent surveys suggest rapid momentum: 62% of respondents report their organizations are at least experimenting with AI agents (McKinsey, 2025), and 74% of companies plan to deploy agentic AI within two years.

The platform will become the operating layer of enterprise QA, using AI-driven decision systems to manage quality. Teams will move from manual oversight to strategic governance. As these workflows become standard, the broader agentic AI market is projected to surge toward USD 199.05 billion by 2034 (Precedence Research, 2025).

The Competitive Landscape: True Orchestration vs. Feature-Led AI

Most enterprise testing platforms now claim AI capabilities. The real distinction lies in execution depth and how a platform handles the entire execution lifecycle.

Qyrus sets itself apart from competitors with SEER (Sense-Evaluate-Execute-Report), an agentic orchestration platform and framework built around autonomous execution. Its architecture focuses on multi-agent coordination across the entire testing lifecycle, from sensing changes to reporting risk insights. While others offer AI as a feature, Qyrus provides a strategic solution to eliminate test debt.

- UiPath and Tricentis: Offer robust enterprise automation with integrated testing. However, many workflows still rely on predefined logic rather than fully autonomous execution.

- ACCELQ and Functionize: Emphasize AI-assisted testing and generative capabilities. These improve efficiency but often focus on specific layers like UI or API, rather than orchestrating multi-agent systems across the full lifecycle.

The ability to coordinate multiple agents, adapt in real time, and execute without manual intervention determines whether AI becomes an incremental improvement or a foundational capability.

Frequently Asked Questions

- What is an agentic orchestration platform?

An agentic orchestration platform coordinates autonomous AI agents, systems, and workflows to execute complex tasks like testing without manual intervention. It acts as a policy-driven coordination layer that connects human goals to system-level actions.

- How is agentic orchestration different from traditional automation?

Traditional automation follows predefined scripts that often break during UI or API changes. Agentic orchestration uses adaptive AI agents to dynamically generate and execute workflows, moving beyond rules-based limitations.

- What are multi-agent systems in testing?

They are collections of specialized AI agents that collaborate to perform different testing tasks such as generation, execution, and validation. Each agent focuses on a specific domain like UI, API, or security.

- How does agentic orchestration reduce test debt?

By enabling Self-Healing Workflows and adaptive test generation, it minimizes script maintenance and eliminates brittle test cases. This closes the gap between software creation and reliable validation.

- Can agentic orchestration integrate with CI/CD pipelines?

Yes, it integrates seamlessly with modern systems like GitHub, Jenkins, and Azure DevOps to enable continuous, automated testing workflows triggered by code commits.

- Which industries benefit most from these platforms?

Enterprises across finance, healthcare, telecom, and SaaS benefit most due to their complex workflows and large-scale systems requiring rigorous audit trails.

Conclusion: Moving Toward an Autonomous Quality Future

Agentic orchestration platforms represent a fundamental shift toward true autonomy. They transform quality assurance into a continuous, AI-driven execution layer. This architecture enables intelligent testing across complex systems by replacing manual bottlenecks with governed actions.

The Forrester Wave report recognized Qyrus as a ‘Leader’ in the autonomous testing market, highlighting its ability to operationalize these advanced agentic workflows at scale. For organizations looking to accelerate releases and eliminate test debt, Qyrus provides the strategic muscle needed for the modern SDLC.

Ready to see it in action? Request a demo to see how Qyrus can help you achieve autonomous, end-to-end testing at enterprise scale.