Automated testing stands as a cornerstone of modern software delivery, yet many organizations find themselves hitting a “scaling wall” where more scripts no longer equal better quality. While many developers now participate in DevOps-related activities, traditional test automation often becomes a bottleneck in high-velocity environments. It focuses on isolated tasks—a single login check or an API call—but fails to account for the complex choreography required by today’s distributed systems.

Enterprises are shifting from monoliths to microservices at a staggering rate, with the average number of applications in an enterprise growing to 957 in a single year. In this environment, running tests in a “fire and forget” fashion leads to fragmented results and massive maintenance overhead. Research shows that 73% of test automation projects fail because they lack a cohesive strategy to manage coordination, visibility, and architectural value.

Test orchestration represents the strategic evolution of quality assurance. It provides the “connective tissue” that manages how, when, and where tests execute across disparate systems. While automation handles the individual tasks, orchestration ensures those tasks run in a controlled, synchronous process that validates entire business workflows. Without this coordination, teams remain trapped in a cycle of manual syncing and environment drift.

The Atomic Unit: What is Test Automation?

Test automation focuses on the execution of individual scripts to verify specific outcomes without human intervention. QA engineers typically use this to handle repetitive, well-defined tasks like regression checks or unit tests. By scripting these steps—such as clicking a button or sending an API call—teams improve consistency and allow for more frequent runs.

Traditional automation relies on specific frameworks and tools:

- Test Scripts: Engineers write code using frameworks like Selenium for web UI, Appium for mobile apps, or JUnit and PyTest for unit-level validation.

- Isolated Execution: Each test generally runs independently or as a simple linear suite triggered by a code commit or a manual prompt.

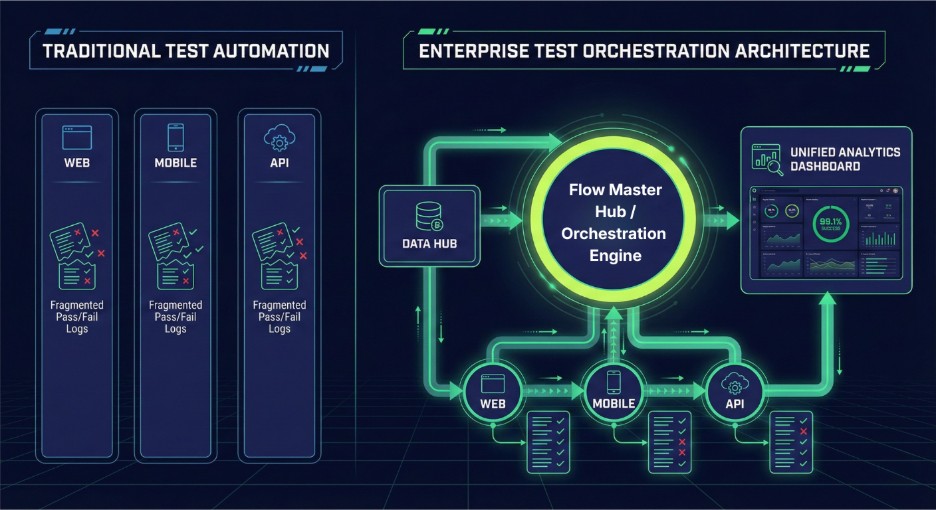

- Individual Reporting: Tools provide pass/fail logs for the specific job at hand, rather than a holistic view of the entire system’s health.

While automation speeds up testing and reduces manual effort, it operates in a vacuum. In complex enterprise automation architecture, these isolated scripts often lead to “maintenance death spirals” where teams spend more time fixing brittle locators than building new features. Global studies indicate that the average level of test automation remains around 44%, meaning more than half of all testing effort is still manual due to these scaling challenges.

The Command Center: What is Test Orchestration?

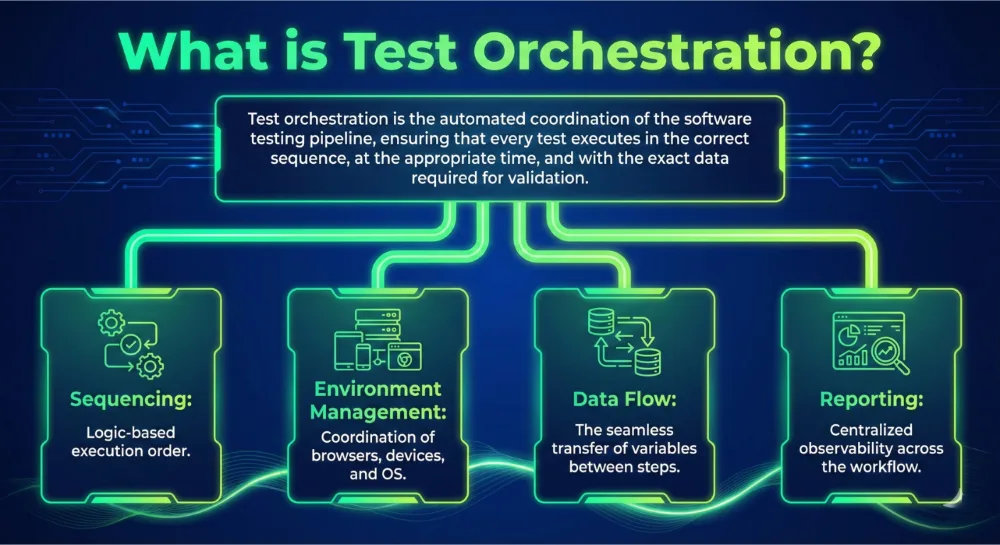

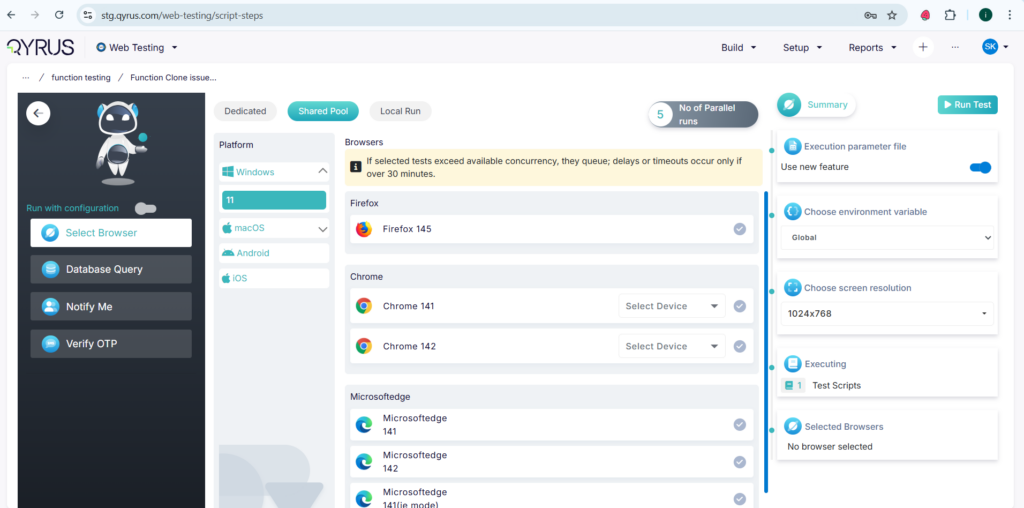

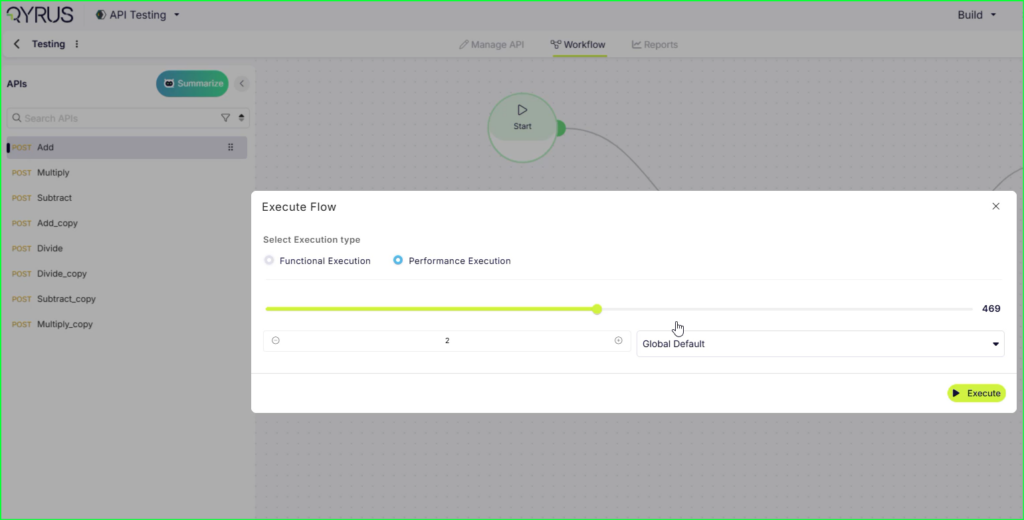

If automation provides the tools to run a test, orchestration provides the process to run hundreds of tests together in a controlled, intelligent sequence. Test orchestration is the automated coordination of your entire testing pipeline, ensuring the right tests run in the right order, in the correct environments, with shared data and unified reporting. It acts as a system-level coordination layer that manages end-to-end workflow orchestration testing across distributed microservices and multi-tier applications.

Key capabilities that define a true orchestration layer include:

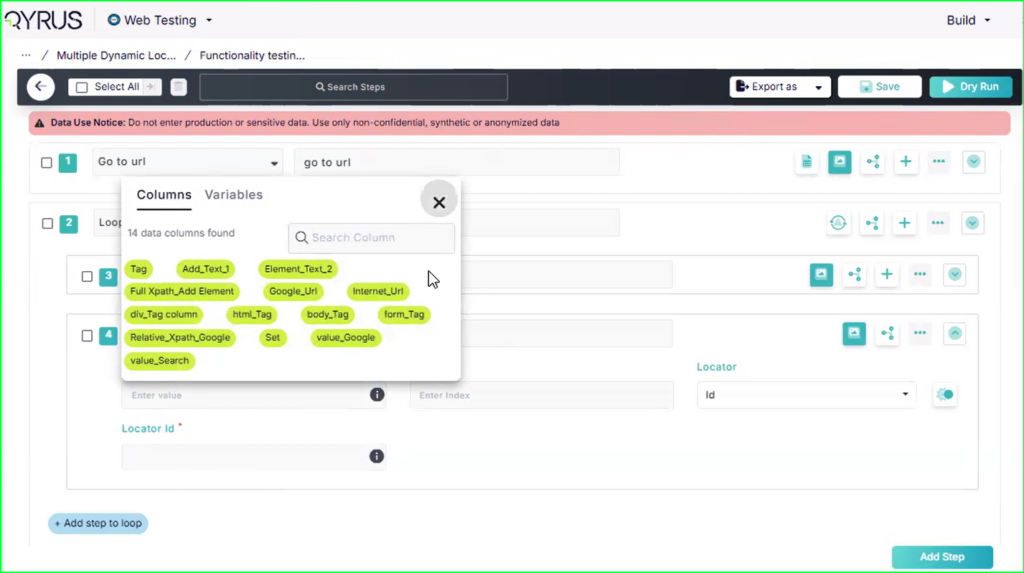

- Intelligent Sequencing and Dependency Management: Orchestration defines complex dependency graphs, allowing teams to model pipelines as specific workflows—such as running service-level tests in parallel only after shared component checks pass.

- Contextual Data Propagation: Unlike siloed scripts, orchestration enables “context data sharing,” where transaction IDs or session tokens generated in one stage automatically flow into the next.

- Environment Coordination: Orchestration engines automatically provision and tear down ephemeral test environments, enforcing consistency to prevent the “environment drift” that plagues manual setups.

- Logical Control and SmartFlow Mapping: Modern orchestration uses action nodes like conditional branching (If/Else), retries for transient failures, and “Stop” actions to halt pipelines on critical errors.
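The sequencing capability described above can be illustrated with a minimal sketch. It assumes a hypothetical four-stage pipeline (the stage names are invented for illustration) and uses Python's standard `graphlib` module to work out which stages may run together; it is not how any particular orchestration product is implemented.

```python
from graphlib import TopologicalSorter

# Hypothetical stages: each key maps to the set of stages it depends on.
PIPELINE = {
    "shared_component_checks": set(),
    "orders_service_tests": {"shared_component_checks"},
    "payments_service_tests": {"shared_component_checks"},
    "end_to_end_checkout": {"orders_service_tests", "payments_service_tests"},
}


def plan_batches(graph):
    """Group stages into batches whose dependencies are already satisfied."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # these stages could run in parallel
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock dependents
    return batches


batches = plan_batches(PIPELINE)
for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {', '.join(batch)}")
```

In this sketch the two service-level stages land in the same batch, which only becomes available after the shared component checks complete.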

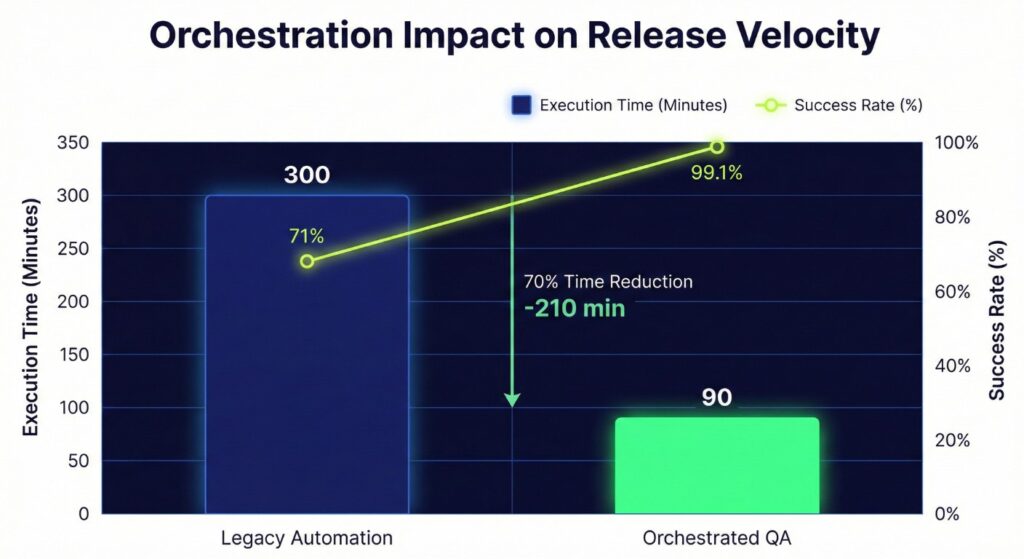

Organizations that move beyond simple triggers to full orchestration see immediate results. Research indicates that 36.5% of organizations still lack any orchestration, leaving them with brittle pipelines and long queues. However, those who implement dedicated orchestration platforms can achieve up to a 90% reduction in execution time by moving from sequential to adaptive parallel execution.

Test Orchestration vs Test Automation: A Strategic Comparison

Understanding the technical boundaries between these two paradigms is essential for building a resilient enterprise automation architecture. While automation answers “how do we execute without manual intervention,” orchestration answers “how do tests run together in a synchronous process.”

The following table breaks down the core functional differences:

Core Functional differences :

| Feature | Test Automation | Test Orchestration |

|---|---|---|

| Scope | Atomic (Single scripts or suites) | Holistic (End-to-end workflows) |

| Data Management | Often hardcoded or siloed per test | Dynamic "Data Hub" & variable propagation |

| Environment Handling | Static, pre-configured environments | Dynamic provisioning and coordination |

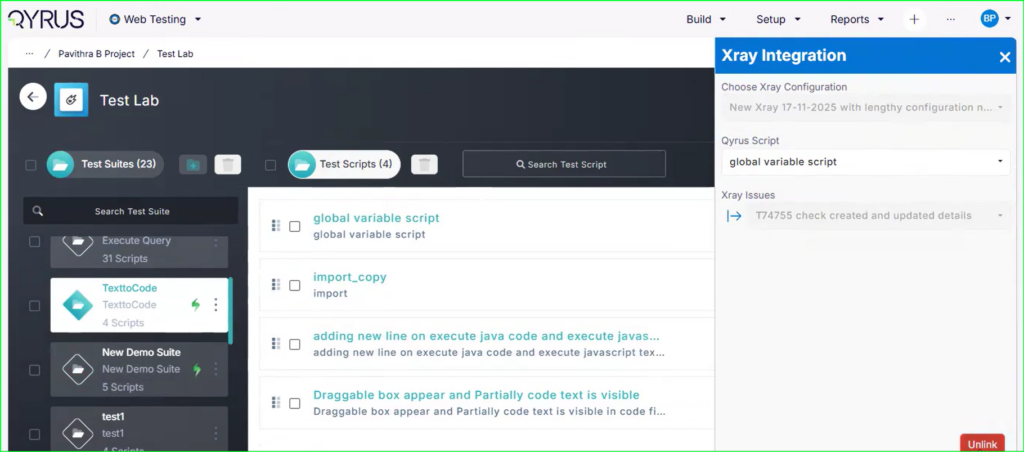

| Integration | Limited to basic CI triggers | Deep CI/CD + cross-platform toolchain |

| Logic | Minimal/Linear | Conditional branching (If/Else, Switch) |

| Decision Making | Manual quality gating often required | Automated conditional progression |

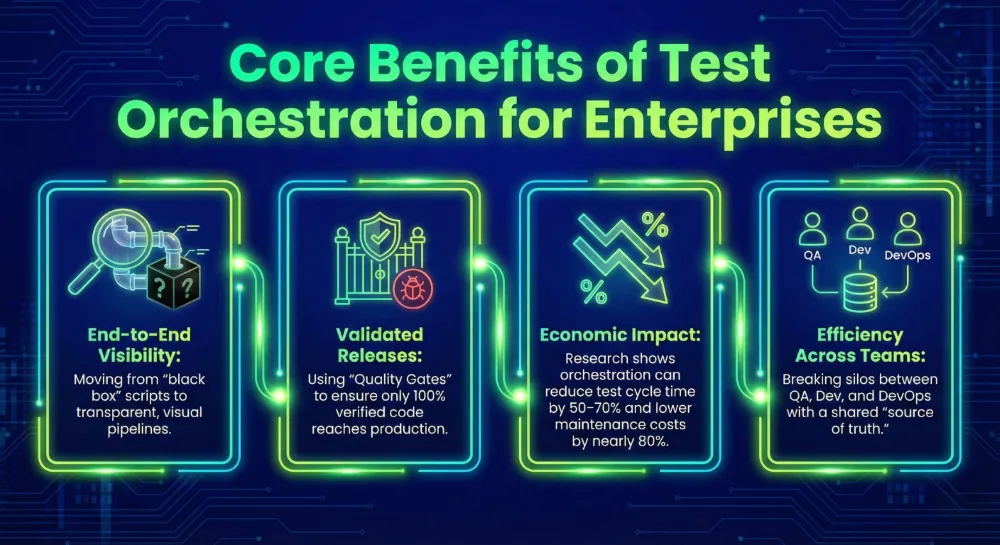

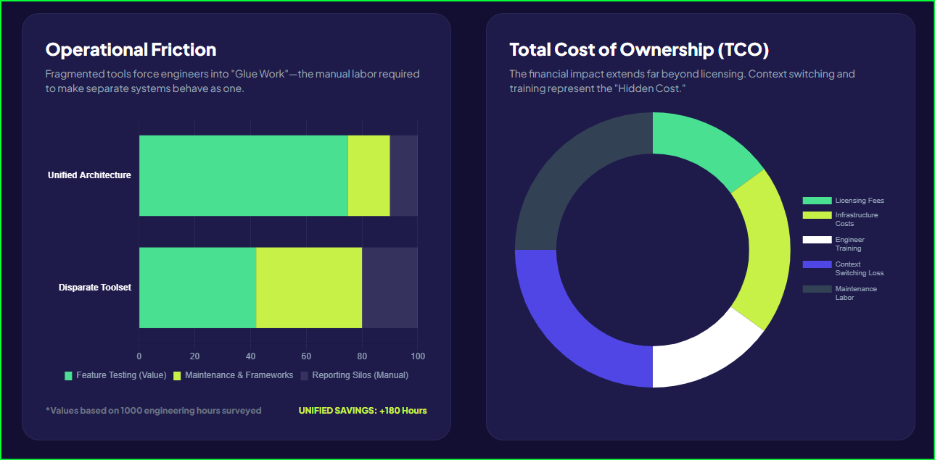

Standalone automation typically generates fragmented pass/fail logs for individual tools. This forces engineers to waste hours daily “hunting for logs” across disparate dashboards to understand why a build failed. Orchestration eliminates this friction by providing centralized observability and aggregated insights. By managing these multi-step flows across components, orchestration ensures that automation provides actual business value rather than just a collection of fragile scripts.

Workflow Orchestration Testing: Beyond Linear Execution

Modern quality assurance requires more than just checking if a single feature works; it necessitates validating how data moves through a complex, multi-system environment. This is where workflow orchestration testing transforms testing from a series of checks into a high-fidelity simulation of user journeys. By focusing on the workflow rather than the isolated test case, teams can validate cross-cutting business logic that spans mobile apps, web interfaces, and backend APIs.

Core concepts that drive this architectural shift include:

- Logical Control Nodes: Orchestration uses specialized actions like Wait for timing synchronization and Retry to handle transient network issues or “flaky” environment states.

- Adaptive Branching: If a critical smoke test fails, the orchestrator can execute “if/else” logic to bypass heavy regression suites, saving significant compute resources and providing faster feedback.

- Parallel and Dependent Stages: Pipelines are modeled as sophisticated graphs where independent services undergo validation simultaneously, while dependent steps wait for clear “pass” signals from upstream components.
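As a rough illustration of adaptive branching, the sketch below gates a regression stage behind a smoke stage. The function and stage names are hypothetical, and the stages are plain callables standing in for real suites.

```python
from typing import Callable, Dict


def run_pipeline(smoke: Callable[[], bool], regression: Callable[[], bool]) -> Dict[str, str]:
    """Run the smoke stage first; run regression only if smoke passes."""
    results = {"smoke": "passed" if smoke() else "failed"}
    if results["smoke"] == "passed":
        results["regression"] = "passed" if regression() else "failed"
    else:
        # Else branch: bypass the expensive suite and record why it did not run.
        results["regression"] = "skipped"
    return results


calls = []


def failing_smoke() -> bool:
    calls.append("smoke")
    return False


def heavy_regression() -> bool:
    calls.append("regression")
    return True


outcome = run_pipeline(failing_smoke, heavy_regression)
print(outcome)
print(calls)
```

Because the smoke stage fails here, the regression callable is never invoked, which is the compute saving the branching logic is meant to provide.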

This level of coordination is no longer optional for the modern enterprise. Research indicates that up to 30% of failing tests in CI/CD pipelines are actually flaky, often due to environment drift or timing errors that linear automation cannot handle. By implementing orchestration, teams catch failures earlier in the cycle, with studies reporting up to 29.4% higher defect detection in modern API testing environments compared to traditional execution.

Designing a Modern Enterprise Automation Architecture

A high-performing QA stack requires more than a collection of standalone tools; it demands a structured enterprise automation architecture that connects code commits to production deployments. Think of this architecture as a city’s power grid. While automation scripts are the individual appliances, orchestration is the grid itself, managing the distribution of resources and ensuring every component receives what it needs to function.

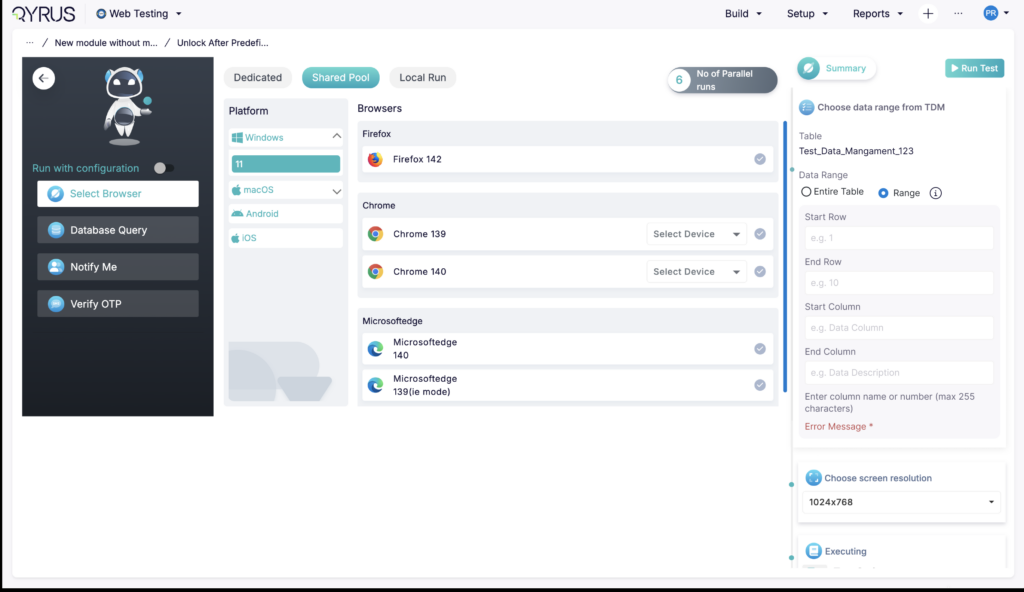

A resilient architecture, like the one provided by Qyrus, typically follows a hierarchical, three-tier structure:

- The Organization/Project Layer: This acts as the administrative foundation where you manage permissions, global variables, and cross-team standards.

- The Workflow Orchestration Layer: Here, teams design the “SmartFlow Mapping” that dictates how tests behave under real-world conditions.

- The Execution and Data Layer: This contains the individual test nodes (Web, Mobile, API, Desktop) and the Data Hub—a centralized repository for persistent data that remains available throughout the execution lifecycle.

Despite the clear benefits, many organizations still struggle to build this connective tissue. By integrating an orchestrator engine directly into the CI/CD pipeline, enterprises transform testing into a proactive “fail-gate” rather than a reactive bottleneck. This architectural shift allows for centralized observability, where every stakeholder sees a unified view of quality rather than hunting through disparate logs.

Navigating the Decision Between Test Orchestration vs Test Automation

Avoid viewing these two concepts as competing alternatives. Instead, treat them as complementary tiers within a modern enterprise automation architecture. Choosing the correct layer for each testing task determines whether your pipeline accelerates delivery or grinds to a halt.

Standard test automation remains the gold standard for verifying isolated functions. Use standalone scripts when you need to validate specific components, such as a single login field or a simple API endpoint response. These scripts are lightweight and provide the rapid feedback developers need during the initial coding phase.

You must pivot to workflow orchestration testing once the scope expands to include multiple systems or complex business logic. Orchestration becomes essential when tests involve dependencies—for instance, when a “Step B” cannot begin until a “Step A” successfully populates a database record.

| Scenario | Best Fit | Primary Reason |
|---|---|---|
| Single component regression | Test Automation | High speed and low complexity for atomic checks. |
| Multi-system user journeys | Test Orchestration | Manages data flow across Web, Mobile, and API. |
| Multi-environment smoke tests | Test Orchestration | Automatically adjusts URLs and credentials per tier. |
| CI/CD "Fail-Gate" reporting | Test Orchestration | Provides the logical controls needed for hands-off releases. |

The choice also impacts your human capital. QA teams that manually manage large automation suites often spend their days troubleshooting environment drift and syncing data across platforms. Research suggests that moving to an orchestrated model can reduce manual QA effort by up to 80%. However, many firms continue to lack this coordination layer, which directly contributes to the 73% failure rate observed in traditional automation-heavy projects.

Real-World Examples: Solving High-Stakes Testing Scenarios

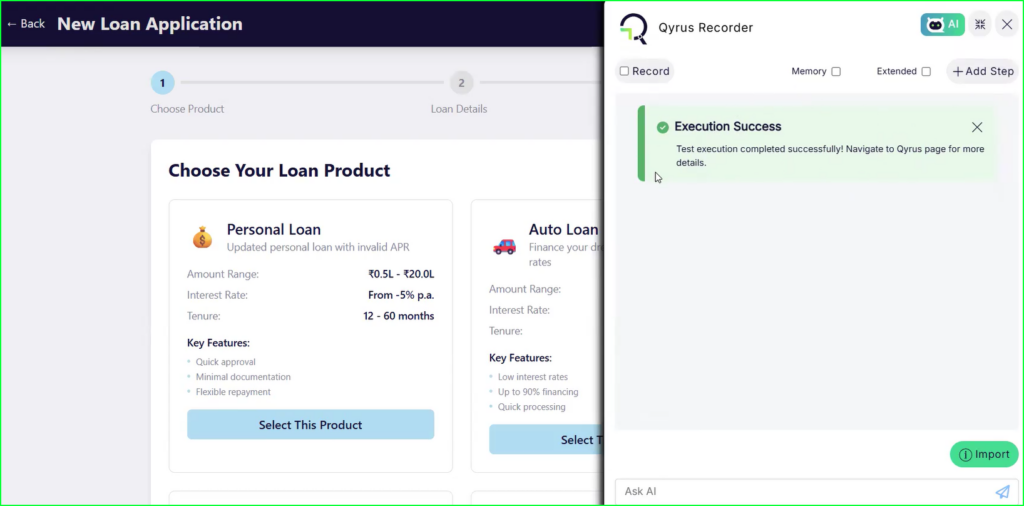

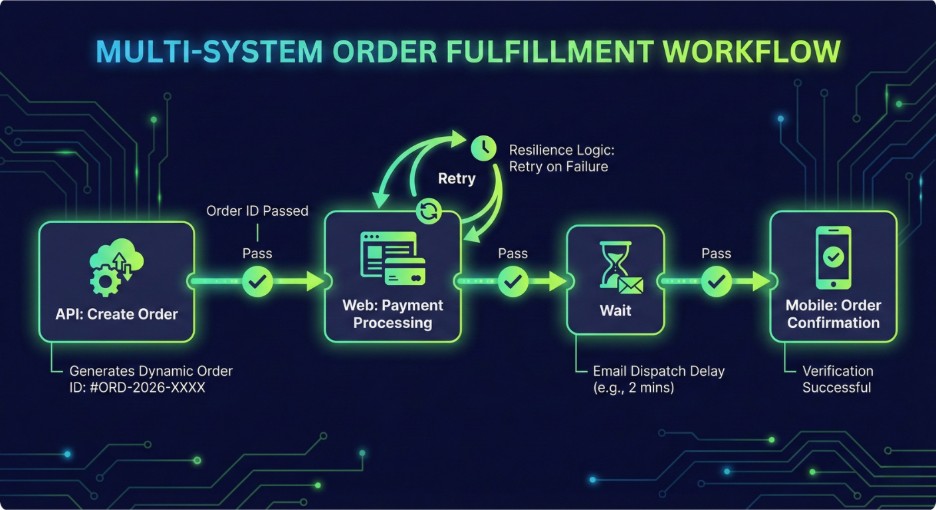

Modern enterprises don’t just ship code; they ship experiences. When a user purchases a product on a mobile app, monitors the shipment on a desktop browser, and receives a real-time email notification, a simple automated script cannot validate the entire journey. This is where workflow orchestration testing replaces fragmented checks with a unified verification process.

Scenario 1: The Multi-System Data Chain

Consider an e-commerce platform where a code update changes the inventory service. In a robust enterprise automation architecture, an orchestrator identifies the change and triggers a choreographed sequence:

- Step 1: API tests create a new order and reserve inventory, capturing a dynamic `order_id`.

- Step 2: The system propagates this ID to a web test that validates the payment gateway and confirms the transaction.

- Step 3: Finally, a mobile script verifies that the “ready to ship” status appears correctly in the user’s account. This chain ensures data flows correctly between microservices, catching cross-system bugs that isolated scripts would miss.
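The three steps above can be sketched as functions that share one context dictionary. Everything here is a stand-in: the step functions, the `ORD-1001` value, and the status strings are hypothetical, and real steps would call the order API, drive a browser, and drive a mobile client.

```python
def api_create_order(context):
    # Stand-in for the API test: a real step would call the order service.
    context["order_id"] = "ORD-1001"
    context["inventory_reserved"] = True


def web_confirm_payment(context):
    # The web step reads the ID produced upstream instead of hardcoding one.
    order_id = context["order_id"]
    context["payment_status"] = f"confirmed:{order_id}"


def mobile_check_status(context):
    # The mobile step verifies it is looking at the same order end to end.
    if not context["payment_status"].endswith(context["order_id"]):
        raise AssertionError("payment does not match the propagated order_id")
    context["shipping_status"] = "ready to ship"


def run_chain(steps):
    """Run steps in order, passing one shared context through all of them."""
    context = {}
    for step in steps:
        step(context)
    return context


context = run_chain([api_create_order, web_confirm_payment, mobile_check_status])
print(context["order_id"], context["shipping_status"])
```

If the first step failed to set `order_id`, the second step would raise a `KeyError` immediately, which surfaces the broken hand-off at the point where it occurs.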

Scenario 2: Adaptive Resilience in Financial Workflows

Financial transfers require absolute reliability. If a payment processor returns a temporary “Service Unavailable” error, standard automation simply fails and marks the build as “red.” An orchestrated workflow handles this with intelligence:

- Conditional Branching: The system detects the error and triggers a “Retry” action with exponential backoff.

- Fallback Logic: If the retry succeeds, the flow continues. If it fails after three attempts, the orchestrator executes a separate branch to alert the fraud monitoring team and clean up the test data.
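A minimal sketch of this retry-then-fallback behavior follows. The `ServiceUnavailable` exception, the `run_with_retry` helper, and the delay values are assumptions made for illustration; the `sleep` parameter is injectable so the example can run without actually waiting.

```python
import time


class ServiceUnavailable(Exception):
    """Hypothetical error type for a temporary 'Service Unavailable' response."""


def run_with_retry(action, on_exhausted, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Retry a transient failure with exponential backoff, then fall back."""
    for attempt in range(1, max_attempts + 1):
        try:
            return "passed", action()
        except ServiceUnavailable:
            if attempt < max_attempts:
                # Delay doubles each time: 0.5s, 1.0s, ...
                sleep(base_delay * 2 ** (attempt - 1))
    on_exhausted()  # fallback branch: alerting and test data cleanup
    return "failed", None


attempts = {"count": 0}


def flaky_transfer():
    # Fails twice, then succeeds on the third attempt.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ServiceUnavailable("503")
    return "transfer-ok"


delays = []
status, value = run_with_retry(flaky_transfer, on_exhausted=lambda: None, sleep=delays.append)
print(status, value, delays)
```

Here the fallback callable is only invoked once every attempt has failed, so a transient outage does not mark the build red on its own.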

The impact of this coordination is measurable. As noted earlier, teams that move from sequential runs to adaptive parallel execution can cut test execution time by up to 90%. Beyond speed, validating the whole workflow rather than isolated steps surfaces cross-system defects earlier in the cycle.

The Force Multiplier: Maximizing Your Existing Automation Investment

Test orchestration does not replace your current scripts; it makes them work harder. Many organizations mistakenly view the debate of test orchestration vs test automation as a choice between two separate paths. In reality, orchestration preserves and elevates the technical work your team has already completed. By wrapping existing scripts into reusable nodes, you transform isolated code into modular assets that any team member can trigger within a larger sequence.
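One way to picture "wrapping existing scripts into reusable nodes" is the sketch below, which treats any command-line test script as a node whose exit code decides pass or fail. The `ScriptNode` class and node names are hypothetical, and the commands are trivial Python one-liners standing in for real suites such as a pytest or Selenium run.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ScriptNode:
    name: str
    command: List[str]  # in practice, the command that launches an existing script

    def run(self) -> bool:
        completed = subprocess.run(self.command, capture_output=True, text=True)
        return completed.returncode == 0  # exit code 0 is treated as a pass


def run_sequence(nodes: List[ScriptNode]) -> Dict[str, bool]:
    """Run nodes in order and stop at the first failure (a simple 'Stop' action)."""
    results = {}
    for node in nodes:
        results[node.name] = node.run()
        if not results[node.name]:
            break
    return results


# Stand-in commands; each just exits with a chosen status code.
nodes = [
    ScriptNode("login_check", [sys.executable, "-c", "raise SystemExit(0)"]),
    ScriptNode("api_check", [sys.executable, "-c", "raise SystemExit(1)"]),
    ScriptNode("mobile_check", [sys.executable, "-c", "raise SystemExit(0)"]),
]
results = run_sequence(nodes)
print(results)
```

The existing scripts stay untouched in this design; only the thin wrapper knows about sequencing, so the same node can be reused in several workflows.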

Adding this layer to your enterprise automation architecture and transitioning to workflow orchestration testing unlocks the following key benefits:

- Reuse of automation assets in larger pipelines: You can chain together disparate scripts for Web, Mobile, and API platforms into a single, synchronous process. This approach turns standalone code into reusable building blocks that support complex end-to-end journeys.

- Better visibility into failures: Orchestration tools aggregate results and metrics from every stage into unified dashboards. This ends the inefficiency of engineers hunting for logs across different tools to understand why a test failed.

- Reduced redundancy: Automated environment provisioning and centralized data management eliminate manual hand-offs and the risk of environment drift. This coordination can reduce manual testing effort by up to 80%.

- Faster feedback loops: Intelligent parallel execution can reduce overall test runtimes by up to 70%. This acceleration moves testing from a nightly bottleneck to a real-time fail-gate that informs every code commit.

This shift ensures your automation provides actual business value rather than just a collection of fragile, disconnected scripts.
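The parallel execution and unified reporting benefits listed above can be sketched together. The suites below are hypothetical placeholders that sleep briefly instead of running real tests, and the report shape (`total`, `failed`, `gate`) is an assumption for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def make_suite(name, seconds, passed=True):
    """Build a placeholder suite that takes `seconds` to run."""
    def suite():
        time.sleep(seconds)
        return {"suite": name, "passed": passed}
    return suite


SUITES = [
    make_suite("web", 0.2),
    make_suite("mobile", 0.2),
    make_suite("api", 0.2, passed=False),
]


def run_parallel(suites):
    """Run independent suites concurrently and collect results in input order."""
    with ThreadPoolExecutor(max_workers=len(suites)) as pool:
        futures = [pool.submit(suite) for suite in suites]
        return [future.result() for future in futures]


def summarize(results):
    """Aggregate per-suite results into one report that can act as a fail-gate."""
    failed = [r["suite"] for r in results if not r["passed"]]
    return {"total": len(results), "failed": failed, "gate": "closed" if failed else "open"}


started = time.perf_counter()
report = summarize(run_parallel(SUITES))
elapsed = time.perf_counter() - started
print(report, f"elapsed={elapsed:.2f}s")
```

Since the three placeholder suites run concurrently, the wall-clock time should be close to the slowest suite rather than the sum of all three, though the actual saving in a real pipeline depends on how independent the suites are.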

Building a Resilient Future for Quality Engineering

The distinction between test orchestration vs test automation represents the difference between running a tool and managing a strategy. Automation provides the technical means to execute a script, yet orchestration provides the intelligence to govern how those scripts behave within a modern enterprise automation architecture.

Without test orchestration, quality teams spend more time syncing data than discovering defects. However, enterprises that bridge this gap achieve shorter test cycles and report release success rates of up to 99%. High-performing QA teams no longer view testing as an ad hoc event but as a continuous, synchronous process.

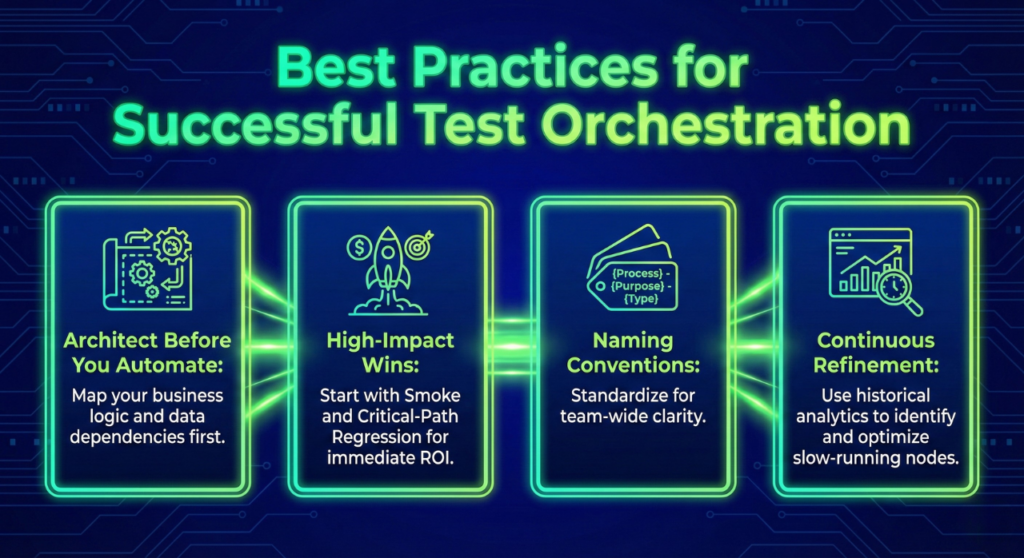

To succeed with workflow orchestration testing, follow these essential best practices:

- Start with a manageable scope: Design your initial workflows with 2–5 nodes to ensure stability before scaling to more complex chains.

- Utilize structural templates: Use proven structural patterns for your workflows to maintain consistency across different teams and projects.

- Prioritize critical user journeys: Focus your orchestration efforts on real-world business processes—such as checkout or onboarding—to see immediate gains in release velocity.

- Automate environment coordination: Eliminate environment drift by using the orchestrator to manage target systems and configurations dynamically.

By moving from isolated execution to automated choreography, you transform your QA department into a driver of business value. You stop reacting to brittle failures and start predicting quality outcomes. Quality demands precision.


Frequently Asked Questions

Is orchestration better than automation?

Think of these as complementary layers rather than competing alternatives. Test automation handles the execution of individual scripts to verify specific functions. Test orchestration provides the “connective tissue” that turns isolated scripts into a controlled, synchronous process. It treats testing as an integrated pipeline step, ensuring that your automation serves a wider business goal.

When do you need test orchestration in QA?

Transition to workflow orchestration testing when your application complexity exceeds the limits of standalone scripts. You require it when a single user journey spans multiple protocols, such as an e-commerce order that begins on a mobile app and concludes with a web-based confirmation. It becomes essential when your tests have strict dependencies or require data propagation between steps.

How does orchestration improve CI/CD testing?

Orchestration functions as the intelligent engine within a modern enterprise automation architecture. It eliminates the delays caused by manual triggers. By utilizing parallel execution, an orchestrator can slash test suite runtimes by up to 70%, delivering feedback to developers in minutes rather than hours. Furthermore, it provides unified reporting and centralized observability, aggregating metrics from every stage of the pipeline into a single source of truth.