Software quality defines market leadership. QA teams today face a clear choice: continue managing fragmented scripts or switch to an integrated system that handles the entire testing lifecycle. Qyrus Test Orchestration provides that integrated system. It allows teams to coordinate complex test scenarios across diverse environments using a visual, no-code interface. By centralizing execution and using AI to handle dynamic conditions, organizations move products from development to release faster.

Current data highlights a significant opportunity for growth. While 83% of developers now work within DevOps environments, 36.5% of firms still lack any form of test orchestration. This gap creates bottlenecks in high-velocity pipelines. Qyrus solves this with a workflow-driven automation platform that ensures every test runs in the right sequence, on the right device, at exactly the right time.

The Strategic Need for Enterprise Test Orchestration Software

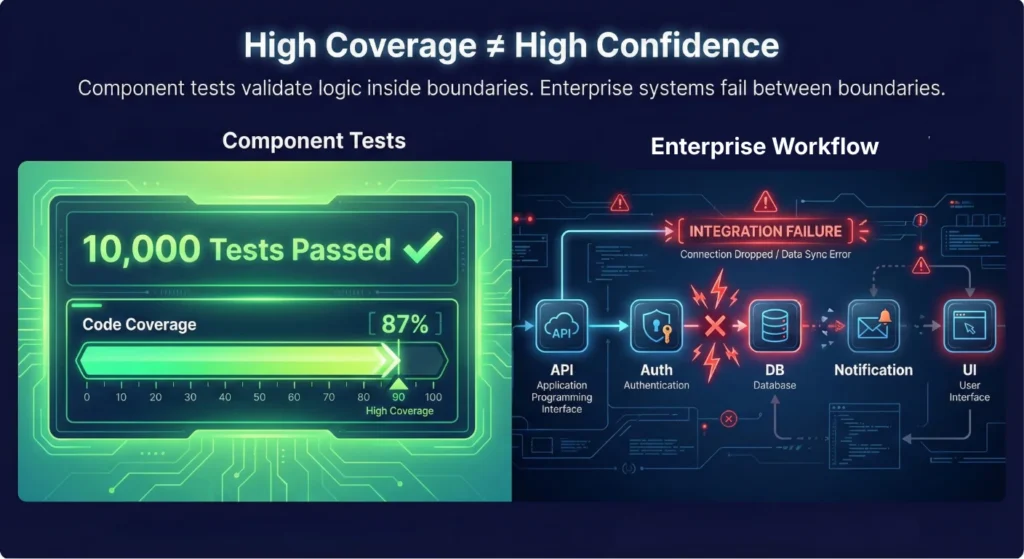

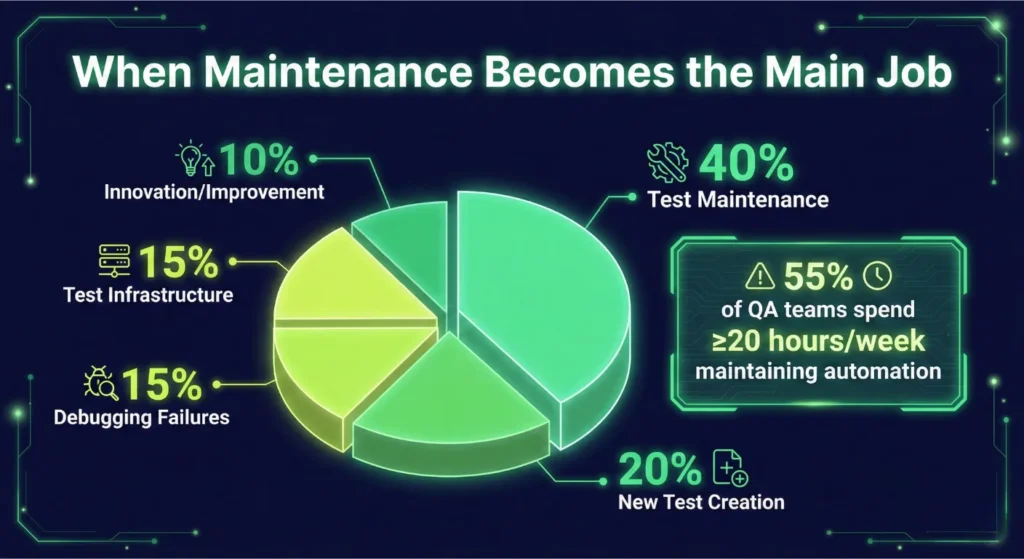

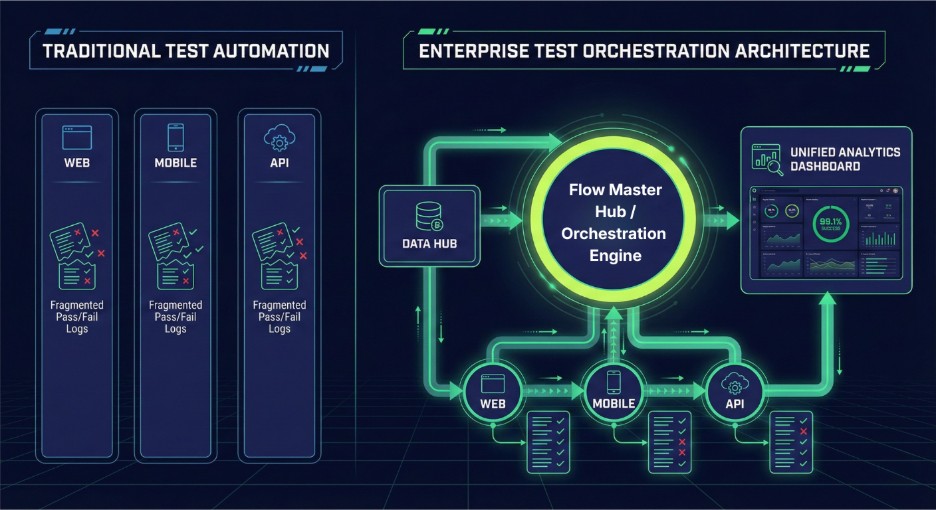

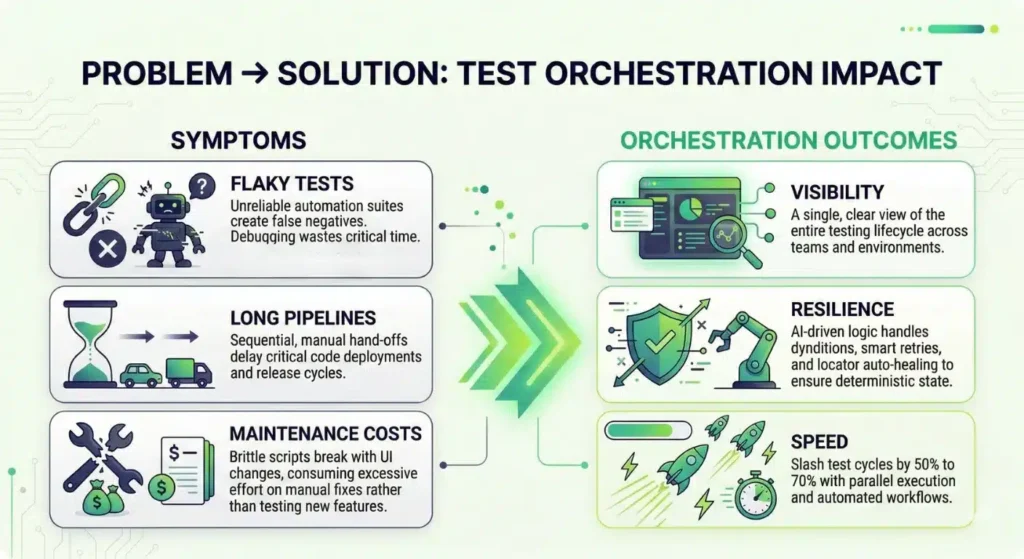

Many organizations struggle with “automation silos.” Teams write scripts for specific features, but these scripts rarely talk to each other. This fragmentation causes major delays. According to a survey, 82% of testers still perform manual or component-level testing daily. Even more concerning, only 45% of teams have automated their standard regression suites. Isolated tests fail to capture how different components interact in the real world.

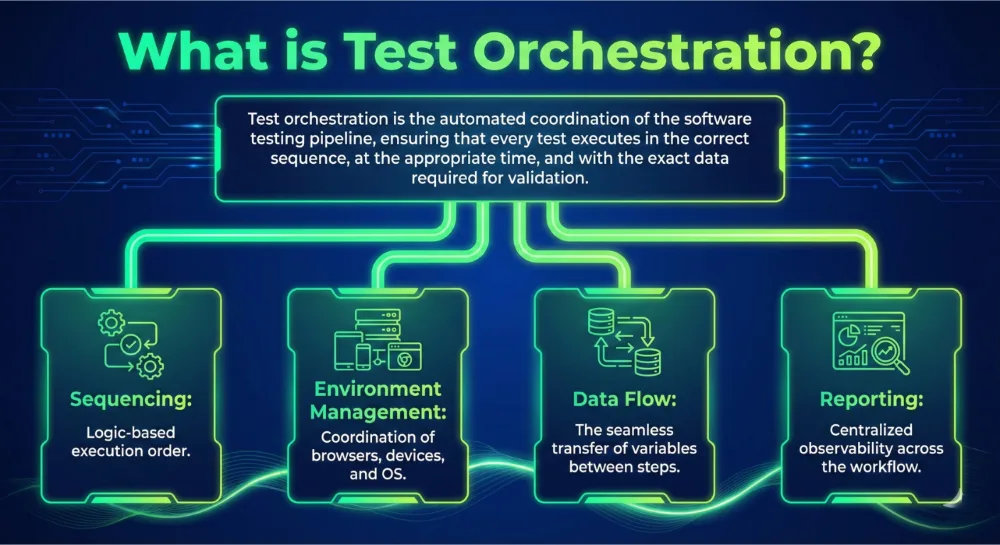

Enterprise test orchestration software moves beyond simple execution. It acts as the brain of your testing strategy. Standard automation tools run scripts; orchestration platforms manage the relationship between those scripts. They handle data dependencies, environment setup, and error recovery automatically.

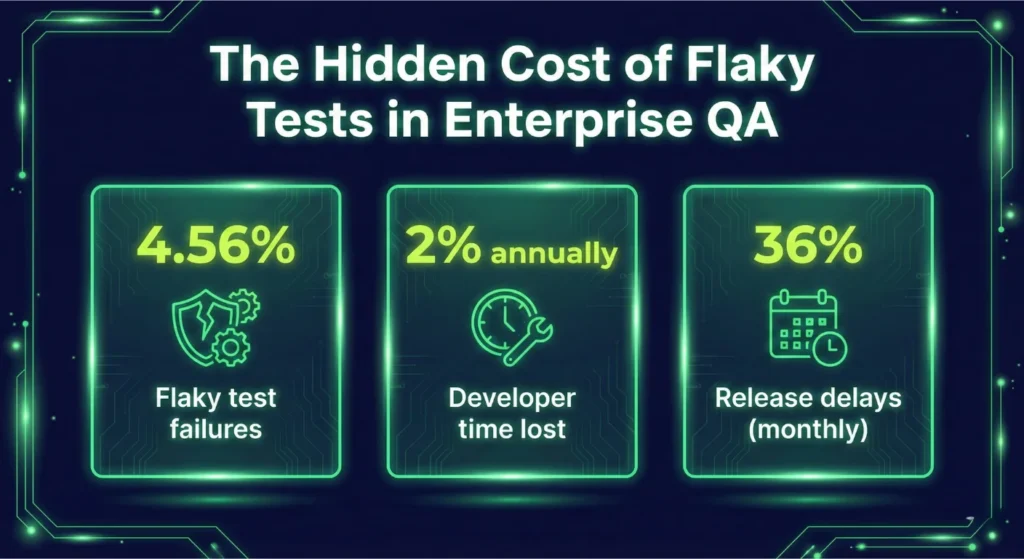

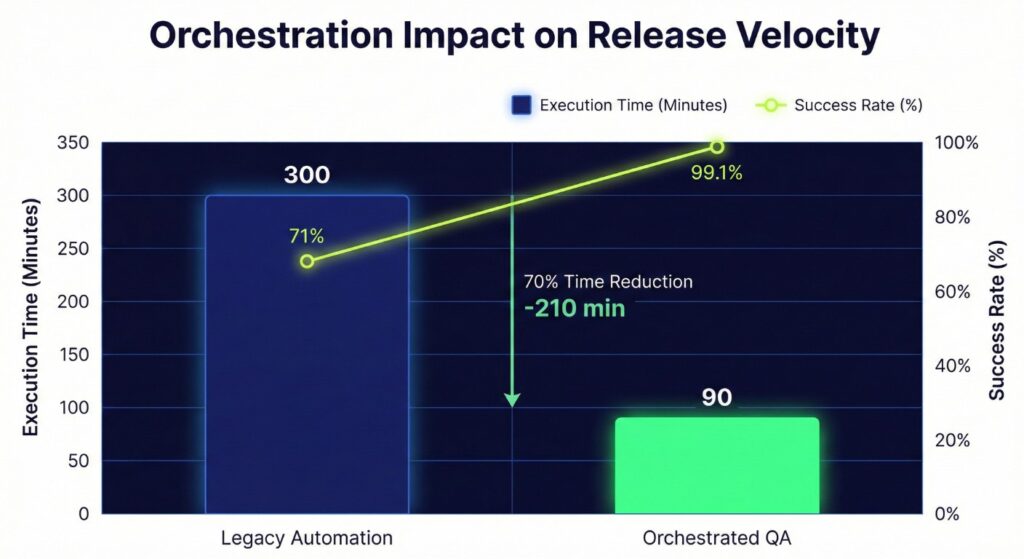

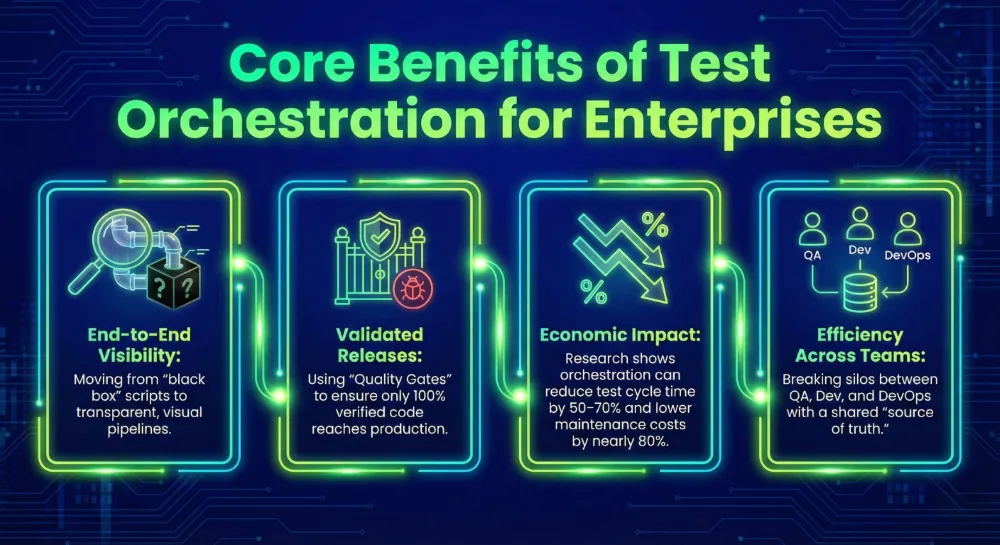

This shift reduces the “flakiness” that plagues most pipelines. Tests that fail for non-functional reasons waste developer time and slow the release cycle. By coordinating the entire flow, orchestration cuts cycle times by 50% to 70% for many teams.

Leaders prioritize orchestration because it lowers the defect escape rate. It creates a safety net that spans the entire software development lifecycle. You no longer hope that your components work together. You prove it. Consistent orchestration ensures that every code change undergoes rigorous validation across every layer of the system.

Qyrus: The Modern Workflow-Driven Automation Platform

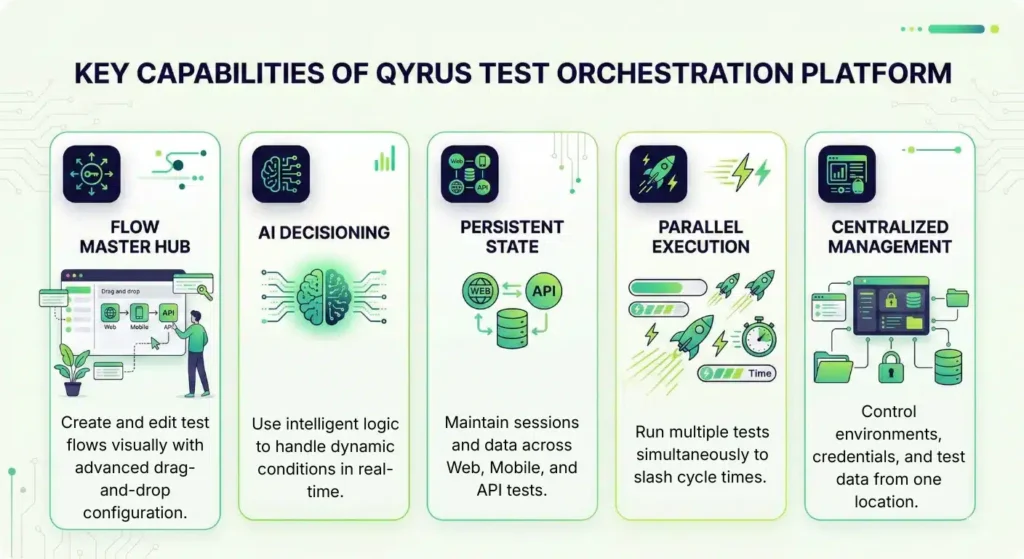

Qyrus transforms testing from a collection of isolated tasks into a cohesive, managed system. It operates as a workflow-driven automation platform that integrates four core pillars: the visual Flow Hub, a centralized Data Hub, a powerful Orchestration Engine, and extensive third-party integrations. This structure allows teams to reduce manual testing efforts by 80% while maintaining total control over the release pipeline. Unlike standard tools that require heavy scripting to manage dependencies, Qyrus uses an AI decision layer to handle complex logic and environment promotion automatically.

Flow Hub: Visual Logic Creation

The Flow Hub serves as the primary workspace for your testing strategy. You drag and drop “Nodes”—individual units representing Web, Mobile, API, or Desktop scripts—and connect them to form a sequence. This visual approach allows QA experts to build sophisticated scenarios without writing a single line of code. Each node contains its own execution settings, allowing you to customize timeouts and skip conditions for every specific step.

Data Hub & State Persistence

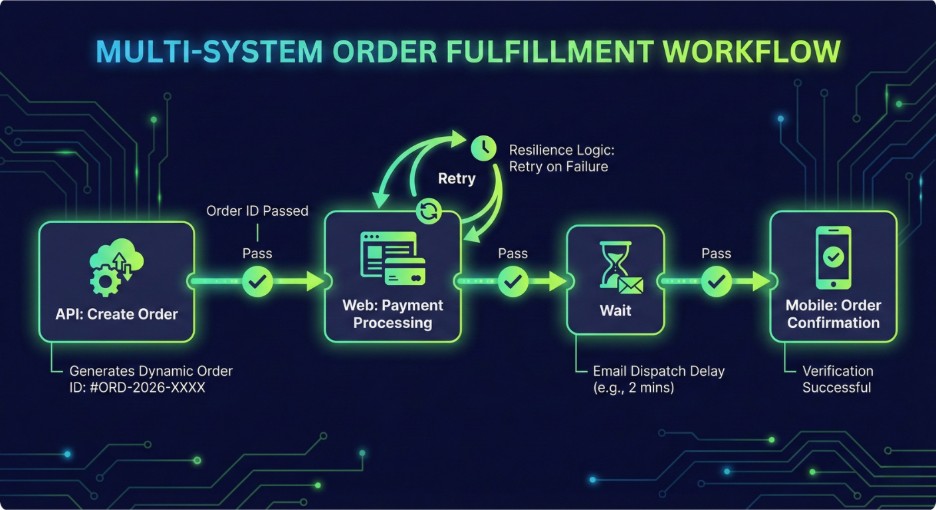

Managing data dependencies often creates the biggest hurdle in automation. Qyrus simplifies this through a centralized Data Hub that supports Global, Workflow, and Step scopes. This ensures that an ID generated in an API test can move seamlessly into a Mobile or Web script. Furthermore, unique session persistence capabilities allow a single browser or device session to remain active across multiple scripts. This prevents the need for constant re-logins and ensures your tests mirror real user behavior.
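To make the scope idea concrete, here is a minimal Python sketch of a scoped lookup. It is not the Qyrus Data Hub API: the `ScopedData` class, its attribute names, and the Step-then-Workflow-then-Global lookup order are all assumptions made for illustration.

```python
from collections import ChainMap


class ScopedData:
    """Hypothetical three-scope store: step values shadow workflow values,
    which shadow global values (this precedence is an assumption)."""

    def __init__(self, global_values=None):
        self.global_scope = dict(global_values or {})
        self.workflow_scope = {}
        self.step_scope = {}

    def begin_step(self):
        # Step-scoped values are discarded when a new step starts.
        self.step_scope = {}

    def get(self, key):
        # ChainMap searches the mappings from left to right.
        return ChainMap(self.step_scope, self.workflow_scope, self.global_scope)[key]


data = ScopedData({"base_url": "https://shop.example.test"})

# API step: creates an order and publishes its ID for later steps.
data.begin_step()
data.step_scope["raw_response"] = {"id": "ORD-1001"}
data.workflow_scope["order_id"] = data.get("raw_response")["id"]

# Web step: reads the ID that the API step produced.
data.begin_step()
order_url = f"{data.get('base_url')}/orders/{data.get('order_id')}"
```

The API step's raw response stays private to that step, while the order ID it publishes at workflow scope remains visible to the Web step that follows.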

Resilience Patterns

Flaky environments often derail even the best automation projects. Qyrus counters this with built-in resilience patterns, including “Retry with Backoff” and “Stop” actions. If an API call fails due to network lag, the platform automatically retries the operation using a linear or exponential delay. These patterns act as circuit breakers, preventing a single transient error from failing an entire multi-hour suite and saving your team hours of manual debugging.
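The difference between a linear and an exponential delay is easiest to see in code. The following is a generic Python sketch of the retry-with-backoff pattern, not Qyrus's implementation; the function names, parameters, and default values are assumptions.

```python
import time


def backoff_delay(attempt, base_s, strategy):
    """Seconds to wait after the given failed attempt (1-based)."""
    if strategy == "linear":
        return base_s * attempt               # 1x, 2x, 3x, ...
    if strategy == "exponential":
        return base_s * 2 ** (attempt - 1)    # 1x, 2x, 4x, ...
    raise ValueError(f"unknown strategy: {strategy}")


def retry_with_backoff(operation, max_attempts=3, base_s=1.0,
                       strategy="exponential", sleep=time.sleep,
                       retry_on=(ConnectionError, TimeoutError)):
    """Call operation() until it succeeds or the attempts run out.

    Only exception types listed in retry_on are treated as transient;
    anything else propagates immediately.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(backoff_delay(attempt, base_s, strategy))
```

Restricting retries to errors that are plausibly transient matters: retrying a failed assertion would only hide a genuine defect.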

Integrations

A platform must fit into your existing ecosystem to provide value. Qyrus connects directly with CI/CD tools and communication platforms like Slack and Microsoft Teams to keep stakeholders informed in real-time. It also supports major cloud providers and various test runners. This connectivity ensures that your orchestrated workflows remain a natural part of your DevOps stack.

Core Features & How They Map to Enterprise Needs

Enterprise testing requires more than just high-speed script execution. Large-scale organizations manage sprawling portfolios of legacy systems and modern microservices that must function in unison. Enterprise test orchestration software bridges this gap by addressing the specific structural failures that cause 73% of automation projects to fail.

Visual Test Flows for Complex Coverage

Most QA teams struggle to automate complex journeys because the underlying code becomes too brittle to maintain. Qyrus solves this through the Flow Hub. You drag and drop test nodes to map out the entire user journey visually. This approach enables teams to achieve higher coverage across multi-platform systems without the technical debt of thousands of lines of custom code.

Conditional Logic for Environment-Aware Testing

Tests often fail because they lack the intelligence to adapt to different environments. Logic control within the platform allows you to define “If-Then” scenarios. For example, a workflow can skip an email verification step in the Development environment but require it in Staging. This environment-aware testing ensures that the same workflow remains valid across the entire release pipeline.
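The email verification example can be sketched in a few lines of Python. In Qyrus this logic is configured visually rather than coded, so the dictionary layout and the `skip_in` key below are hypothetical, chosen only to show the idea.

```python
# One workflow definition, reused unchanged in every environment.
WORKFLOW = [
    {"name": "register_user"},
    {"name": "verify_email", "skip_in": ("development",)},  # hypothetical key
    {"name": "login"},
]


def plan(workflow, environment):
    """Return the names of the steps that should run in this environment."""
    return [
        step["name"]
        for step in workflow
        if environment not in step.get("skip_in", ())
    ]


dev_steps = plan(WORKFLOW, "development")
staging_steps = plan(WORKFLOW, "staging")
```

Here `dev_steps` omits `verify_email` while `staging_steps` includes it, so the environment changes the execution plan but not the workflow definition.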

Session Persistence for True E2E Tests

Standard automation tools usually restart the browser or clear the device cache between test scripts. This resets the user state and makes deep end-to-end testing nearly impossible. Qyrus maintains session persistence across Web, Mobile, and API tests. A single login at the start of a workflow carries through every subsequent node, mirroring exactly how a real customer interacts with your brand across different platforms.

Data Hub for Deterministic State

Inconsistent test data causes frequent false negatives. The Data Hub acts as a centralized repository that passes information, such as unique Order IDs or customer tokens, between steps. This ensures a deterministic state throughout the run. When every test uses fresh, accurate data from the previous step, you eliminate the “data pollution” that often breaks shared testing environments.

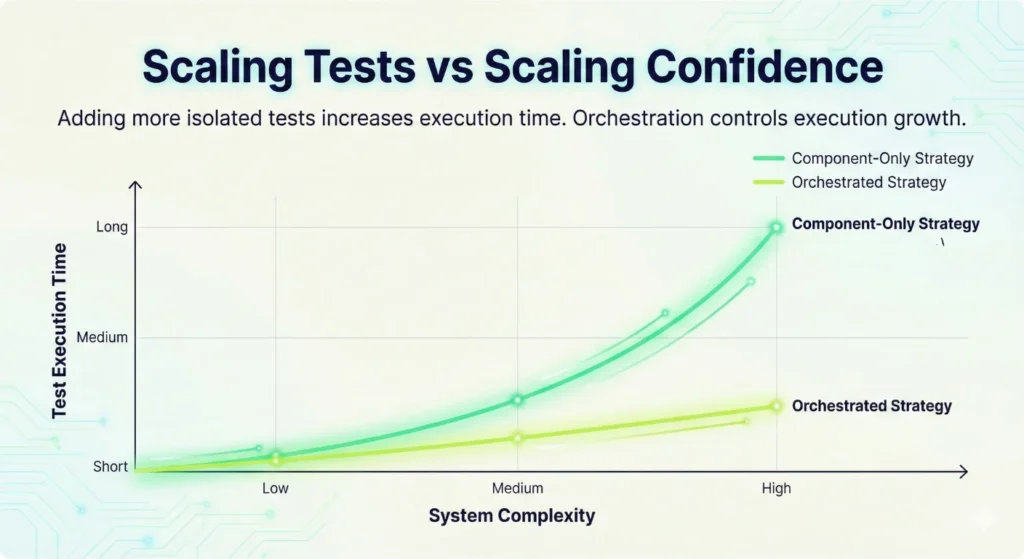

Parallel Nodes for Faster Pipelines

Cycle time remains the primary metric for DevOps success. Orchestration allows you to run independent test nodes in parallel rather than waiting for one to finish before starting the next. This capability significantly slashes execution time, helping teams meet the demand for daily or even hourly releases.
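As a rough illustration of running independent nodes side by side, the sketch below uses Python's standard `concurrent.futures` module. The `run_parallel` helper and the node names are assumptions; a real orchestrator would dispatch to browsers, devices, or API runners rather than plain functions.

```python
from concurrent.futures import ThreadPoolExecutor


def run_parallel(nodes, max_workers=4):
    """Run independent nodes concurrently.

    nodes maps a node name to a zero-argument callable. Returns a dict
    mapping each name to "passed" or "failed: <error message>".
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(fn) for name, fn in nodes.items()}
    # Leaving the with-block waits for every submitted node to finish.
    results = {}
    for name, future in futures.items():
        error = future.exception()
        results[name] = "passed" if error is None else f"failed: {error}"
    return results


def failing_check():
    raise AssertionError("cart total mismatch")


results = run_parallel({
    "api_inventory": lambda: None,
    "web_search": lambda: None,
    "mobile_cart": failing_check,
})
```

One node failing does not cancel its siblings; each result is collected separately, so the report still shows which independent checks passed.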

AI Decisioning for Resilient Testing

Flaky tests are a significant drain on resources, often consuming up to 16% of a developer’s time. Qyrus adds an AI decision layer that identifies whether a failure is a genuine bug or a transient environment glitch. Smart retries and circuit-breaker patterns allow the system to recover from minor network lags automatically. This ensures your team only investigates real issues, which improves overall execution accuracy and builds trust in the automation suite.

The AI Advantage: Why an AI Test Orchestration Platform Matters

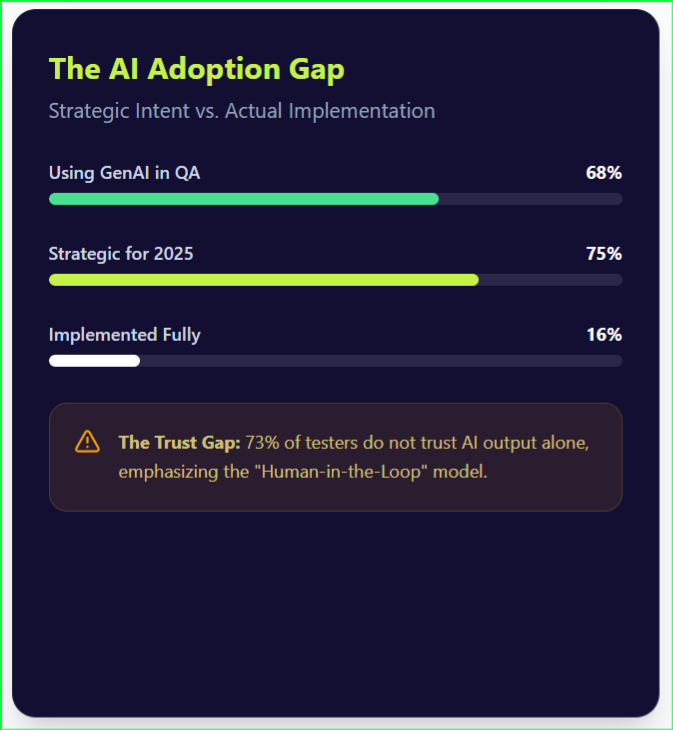

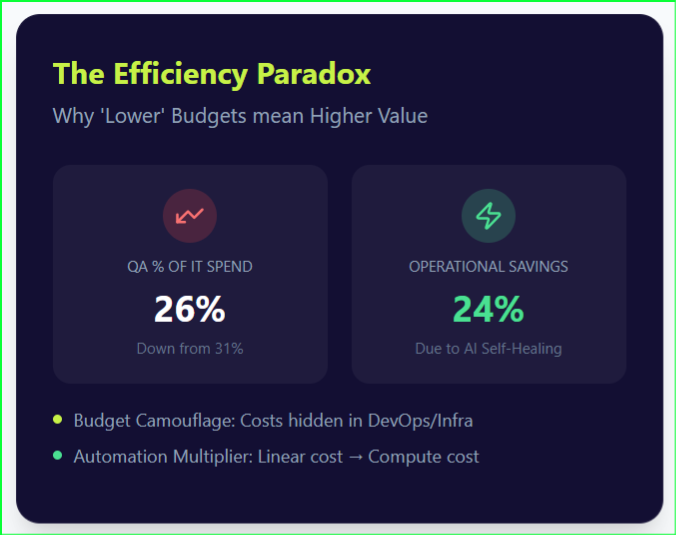

Traditional automation often collapses under the weight of flaky tests. When a locator changes or a network blips, scripts break and require manual fixes. An AI test orchestration platform solves this by introducing “self-healing” capabilities. If the system detects a modified UI element, it automatically updates the locator during execution to prevent a failure. This shift toward intelligence is why 76% of developers now use or plan to use AI tools in their development process.

Smart classification provides the second major advantage. Instead of a generic “failed” report, the platform uses machine learning to categorize the root cause. It distinguishes between a transient environment glitch and a genuine code regression. This clarity allows teams to reduce triage time by up to 35%. You no longer waste hours investigating “ghost” failures that fix themselves on a rerun.

Intelligence also optimizes how you run your tests. The platform analyzes historical data to prioritize high-risk areas. If a specific microservice fails frequently, the AI places those tests at the front of the queue. While the system handles these complex decisions, human oversight remains vital. The platform provides “Confidence Scores” for every automated decision, allowing QA leads to verify and approve major structural changes. This collaboration ensures that speed never comes at the cost of accuracy.
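Two of these ideas, history-based prioritization and confidence-gated approval, can be approximated with simple rules. The Python sketch below is a simplified stand-in rather than the platform's model: it ranks tests by observed failure rate, and the 0.8 review threshold is an arbitrary assumption.

```python
def prioritize(history):
    """Order test names so the most failure-prone run first.

    history maps a test name to a list of past outcomes (True = passed).
    Ties are broken alphabetically so the order is stable.
    """
    def failure_rate(name):
        runs = history[name]
        return runs.count(False) / len(runs) if runs else 0.0

    return sorted(history, key=lambda name: (-failure_rate(name), name))


def route_decision(confidence, threshold=0.8):
    """Apply high-confidence decisions automatically; queue the rest
    for a QA lead. The threshold value is an assumed example."""
    return "auto_apply" if confidence >= threshold else "needs_review"


queue = prioritize({
    "checkout": [True, False, False],
    "search": [True, True],
    "login": [False, True],
})
```

With this history, `checkout` (two failures in three runs) moves to the front of the queue, followed by `login` and then `search`.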

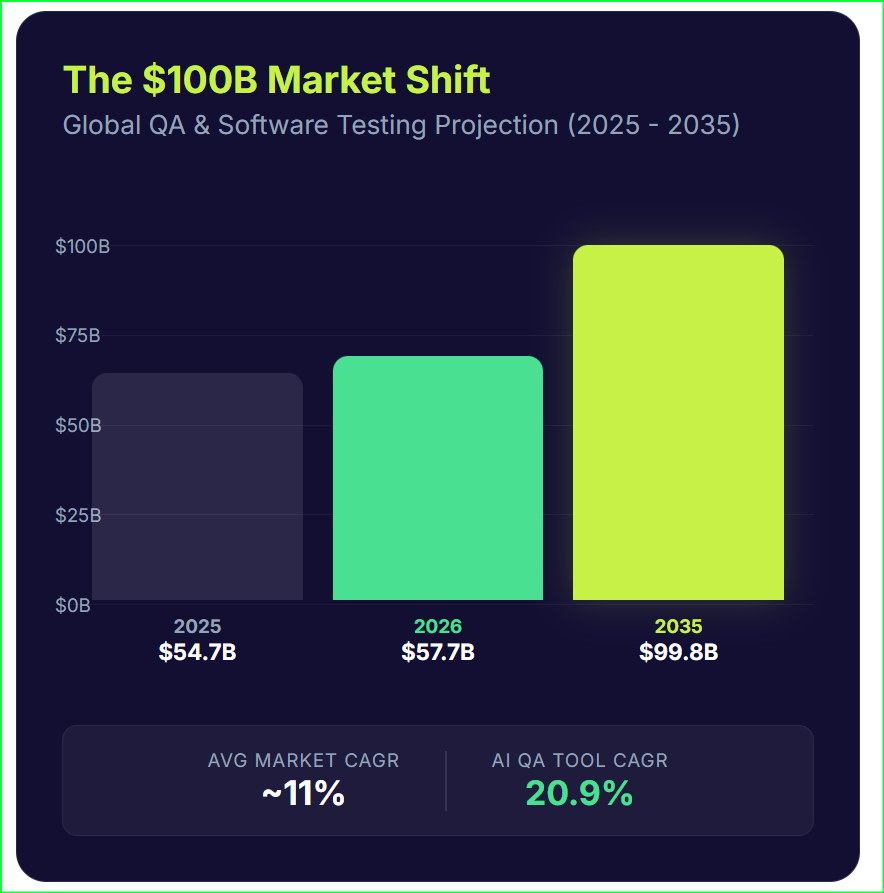

The market reflects this move toward smarter systems. MarketsandMarkets expects the AI in software testing market to grow at a CAGR of 22.3% through 2032. By letting AI handle the routine repairs, your engineers can focus on designing better user experiences.

Typical Enterprise Use Cases & Playbooks

Enterprise teams don’t just test features; they test business outcomes. A single user action often triggers a complex chain reaction across dozens of services, internal APIs, and legacy databases. Manually triggering these tests or relying on loosely coupled scripts leads to “blind spots” where integration failures hide. Orchestration provides a structured playbook for these high-stakes scenarios.

Release Smoke + Regression Across 40 Microservices

Large-scale applications now rely on dozens of independent services. When a developer updates one microservice, you must validate how it interacts with the rest of the dependency graph. A workflow-driven automation platform allows you to chain contract tests, API mocks, and UI smoke tests into a single, synchronized flow.

This coordinated approach helps companies achieve shorter test cycles by eliminating manual hand-offs between infrastructure and QA teams.

The Resilient Payment Journey

A standard checkout involves a UI interaction, an API call to a payment gateway, a ledger update, and a final customer notification. If the ledger update fails, the system shouldn’t just stop. Qyrus uses “circuit breaker” and “rollback compensation” patterns to manage these failures.

If a critical step fails, the orchestrator can automatically trigger a compensating transaction or send an immediate high-priority alert to the DevOps team. This ensures that a failure in one layer doesn’t leave the system in an inconsistent state or corrupt customer data.
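The compensation idea follows the general saga pattern: each step is paired with an action that undoes it. Below is a minimal Python sketch under that assumption; the `run_with_compensation` helper and the step names are hypothetical and do not reflect how Qyrus implements rollback.

```python
def run_with_compensation(steps):
    """Run steps in order; if one fails, undo the completed ones in reverse.

    steps is a list of (name, action, compensate) tuples, where action
    and compensate are zero-argument callables.
    """
    completed = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception as exc:
            # Roll back newest-first so dependent state unwinds cleanly.
            for _done_name, undo in reversed(completed):
                undo()
            return {"status": "rolled_back", "failed_step": name, "error": str(exc)}
        completed.append((name, compensate))
    return {"status": "completed"}


log = []


def ledger_down():
    raise RuntimeError("ledger unavailable")


outcome = run_with_compensation([
    ("charge_card", lambda: log.append("charge"), lambda: log.append("refund")),
    ("update_ledger", ledger_down, lambda: log.append("revert_ledger")),
    ("notify_customer", lambda: log.append("notify"), lambda: None),
])
```

In this run the card charge is refunded after the ledger step fails, and the customer notification never fires, so no layer is left half-updated.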

Cross-Platform Continuity with Session Persistence

Modern customers often start a journey on a mobile app and finish it on a desktop browser. Traditionally, testing this required two separate scripts with no shared data or session history. Enterprise test orchestration software changes this through session persistence.

The orchestrator keeps the user logged in as the test moves from a mobile device to a web browser or a desktop application. This validates the true end-to-end experience and catches state-sync issues that isolated tests miss. By testing the way customers actually behave, you catch defects that usually escape to production.

Security, Compliance & Enterprise Governance

Enterprises in highly regulated sectors like finance and healthcare cannot compromise on data integrity. While cloud adoption grows, 90% of organizations will maintain hybrid cloud deployments through 2027 to meet strict residency and security requirements. Enterprise test orchestration software must provide the same level of control as the production environments it validates. A single data breach now costs companies an average of $4.4 million, and regulatory fines under frameworks like GDPR can reach 4% of global annual turnover.

Governance and Data Control

A workflow-driven automation platform acts as a secure vault for your testing assets. Qyrus handles sensitive information through dedicated credential management, ensuring that API keys and passwords never appear in plain text within test scripts. Role-Based Access Control (RBAC) limits visibility, so only authorized personnel can view or edit critical workflows in production-level environments. This prevents unauthorized changes and protects sensitive system configurations.

Auditability and Segregation

Regulated industries require a clear paper trail for every code change. The platform maintains detailed audit trails and activity logs that track who executed a test, what parameters they used, and when the run occurred. This transparency simplifies compliance audits and internal reviews.

Furthermore, environment segregation prevents accidental cross-contamination between development, staging, and production tiers. By using data masking, teams can run realistic tests without exposing actual Personally Identifiable Information (PII) to the QA environment. This approach keeps tests realistic while protecting the organization from legal and financial risk.
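One common way to mask data is to replace each real value with a deterministic placeholder, so that records still join correctly across tables. The Python sketch below illustrates this for email addresses; the `mask_email` function, the salt handling, and the output format are assumptions, and a production masking policy would need review against the regulations that apply to you.

```python
import hashlib


def mask_email(email, salt="replace-with-a-secret-salt"):
    """Map a real address to a stable, non-routable placeholder.

    The same input always yields the same output, which keeps
    relationships between masked records intact.
    """
    normalized = email.strip().lower()
    digest = hashlib.sha256((salt + normalized).encode("utf-8")).hexdigest()[:12]
    # .invalid is a reserved top-level domain that never resolves.
    return f"user_{digest}@example.invalid"


masked = mask_email("Jane.Doe@corp.example")
```

Because the mapping is deterministic, the same customer receives the same placeholder in every table, while the original address never reaches the QA environment.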

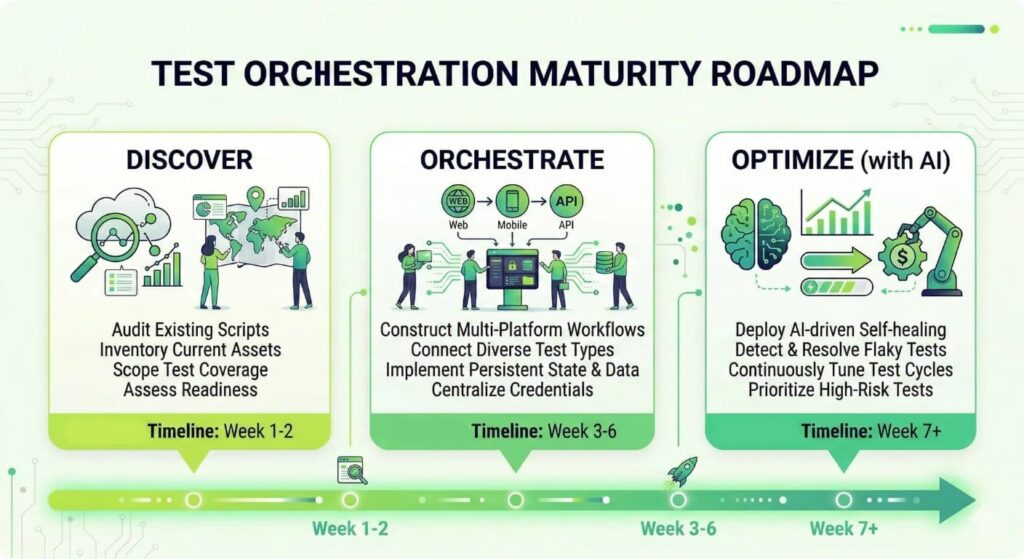

Migration Path: From Component Tests to Orchestrated Workflows

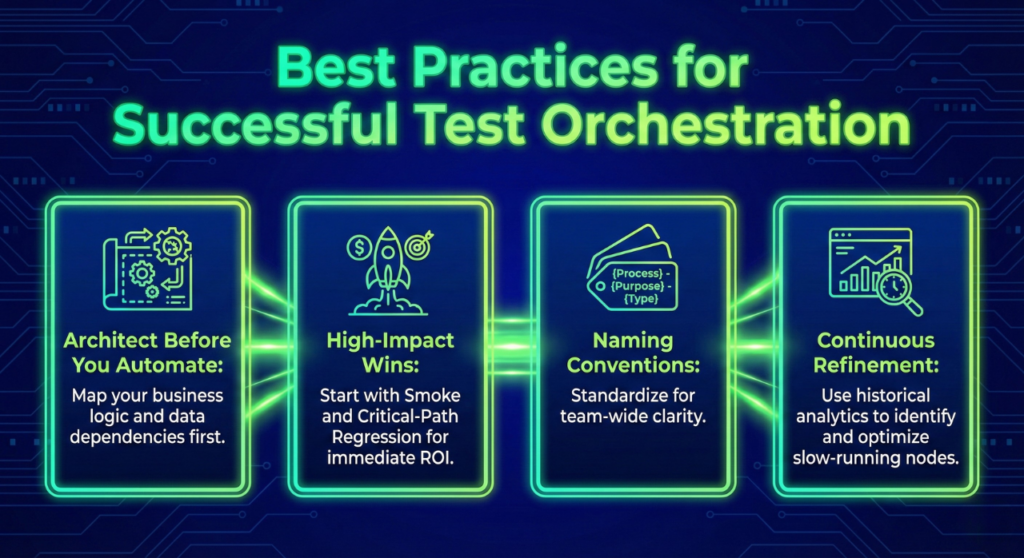

Transitioning from fragmented component testing to a structured workflow-driven automation platform requires a tactical, phased approach. Organizations cannot simply lift and shift every script overnight without creating technical debt. A successful migration moves through four distinct stages to ensure stability and immediate value.

Stage 1: Inventory and Audit

Begin by auditing your existing library of unit and functional scripts. Identify which tests provide the most value and which have become redundant or “flaky.” Statistics show that flaky tests consume up to 16% of a developer’s time, so this is the perfect moment to prune low-quality assets. Categorize your scripts by their role in the user journey to prepare them for the Flow Hub.

Stage 2: Quick Wins with Smoke Workflows

Do not attempt to orchestrate your entire regression suite on day one. Instead, focus on “quick wins” by building automated smoke tests for your most critical paths. Qyrus provides templates for login and session validation that allow teams to get up and running in just 1-2 hours. These high-visibility workflows demonstrate immediate ROI and build team confidence in the new system.

Stage 3: Expanding Orchestrated Flows

Once your smoke tests are stable, begin connecting more complex nodes. This stage involves using the Data Hub to pass information between Web, Mobile, and API scripts. Use session persistence to maintain a single user state across these platforms. Most enterprises find that coordinating these multi-component systems results in 50% to 70% shorter test cycles compared to their old manual hand-off processes.

Stage 4: Optimize with an AI Test Orchestration Platform

The final stage involves layering intelligence over your workflows. Enable smart retries and “retry with backoff” patterns to handle transient environment issues automatically. As the system gathers data, use the platform’s AI capabilities to identify failure patterns and suggest locator fixes. This maturity level allows your team to stop “firefighting” and start focusing on strategic quality engineering.

Migration Best Practices and Pitfalls

Avoid the common pitfall of 1-to-1 script migration. Simply running an old script inside a new container does not capture the benefits of orchestration. Instead, re-think how those scripts should interact. Qyrus minimizes the technical burden by offering a managed migration process that typically requires only a 2-day downtime window to move all existing web scripts from old component services to the core orchestration engine.

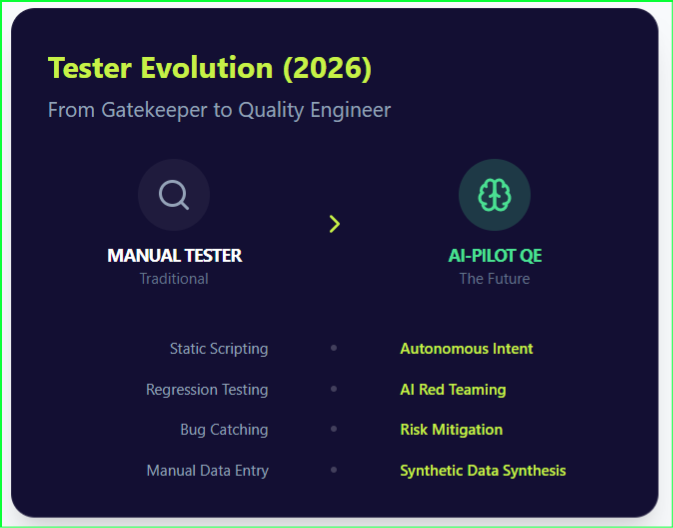

Quality Engineering: From Managing Scripts to Governing Systems

Modern delivery pipelines demand more than isolated checks; they require a coordinated, intelligent strategy. Adopting enterprise test orchestration software allows your team to connect Web, Mobile, and API tests into one seamless journey. This shift removes the bottlenecks that prevent high-velocity releases.

The financial and operational benefits remain high across all industries. Teams using a workflow-driven automation platform report shorter test cycles, lower maintenance costs, and reduced manual testing efforts. These improvements ensure your engineers spend their time building features rather than repairing brittle scripts. Early adoption provides a clear market advantage. Orchestration gives you the stability needed to release with absolute confidence.

Take control of your testing lifecycle today with a demo of Qyrus Test Orchestration.